- Incorta Community

- Community Hub

- Incorta Conundrums

- Challenge #1 Employees transition between departme...

- Subscribe to RSS Feed

- Mark Topic as New

- Mark Topic as Read

- Float this Topic for Current User

- Bookmark

- Subscribe

- Mute

- Printer Friendly Page

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

11-18-2022 08:06 AM

In an organization for software development, some employees make a decision to move to another department to gain more experience and help in the implementation and improvement processes,

As an end user, I need to track and have a complete picture of the flow between the organization’s departments

Can you help me get this data insight??

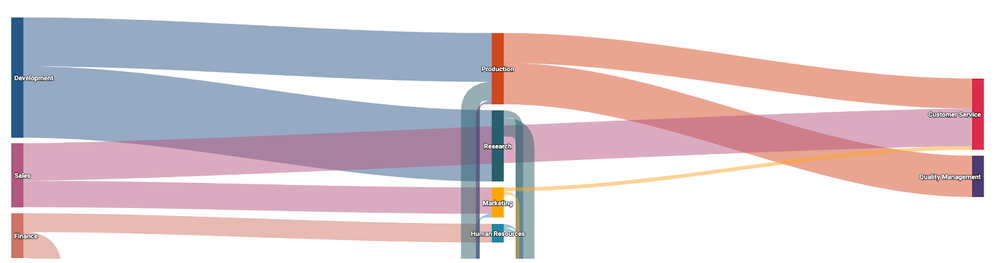

I would like to see a Sankey chart showing the movement of the employees between departments in an organization based on the employees' count I believe it should be something like this:

You can use the below-attached data sets files:

1-employees.csv

2-department.csv

3-dept_emp.csv

Also, you can check this article as an example for the Sankey chart

https://docs.incorta.com/5.2/references-visualizations-sankey#sankey-insight-example

Please try it, and attach here the outputs(schema and the dashboard) I Hope you enjoy it 😊.

Solved! Go to Solution.

- Labels:

-

Advanced

-

Business Logic

-

Insights

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

11-22-2022 10:35 AM - edited 11-23-2022 02:58 AM

Here is my output.

- Upload the CSV files, create a schema and create a table from each one.

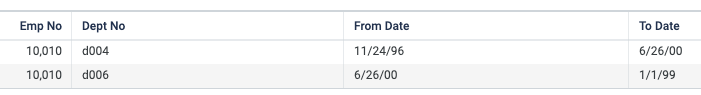

- Create Materialized View (MV) that generates all the employee transitions.

- Create another table that shows the total number of employees that moved from one department to another (EmployeeTransitionSummary)

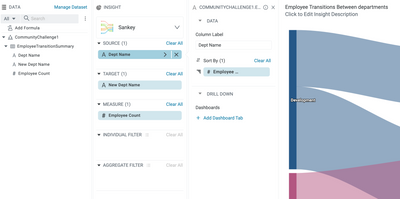

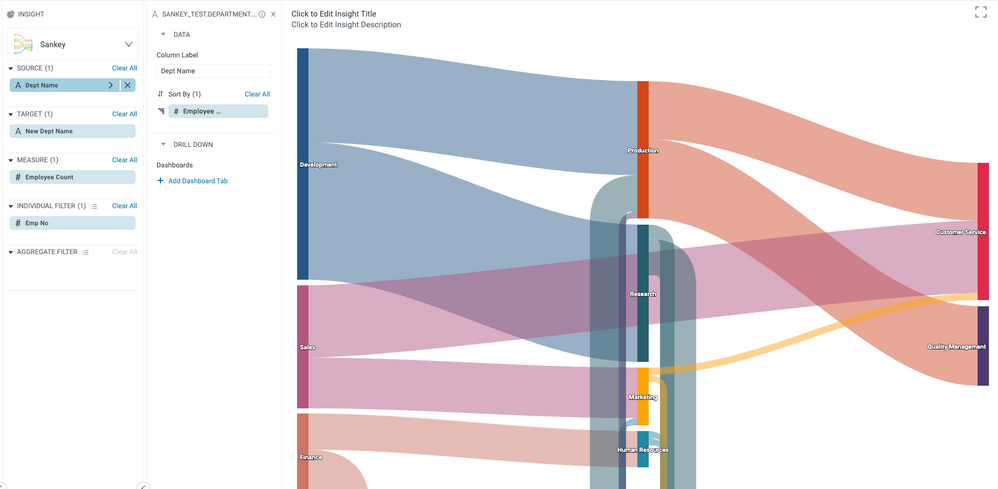

- Create a new insight, and use Dept Name as the source, New Dept Name as the target, and Employee Count as the measure. Optionally sort the source field by Employee Count. (We can also use Insight over Insight instead of creating a separate table for EmployeeTransitionSummary)

Now for the Materialized View in step#2, here is the PySpark script for EmployeeTransitions.

from pyspark.sql.window import Window

from pyspark.sql.functions import lead

dept = read('CommunityChallenge1.department')

dept_emp = read('CommunityChallenge1.dept_emp').withColumnRenamed('dept_no', 'employee_dept_no')

# join with department table to include department name

dept_emp = dept_emp.join(dept, dept.dept_no == dept_emp.employee_dept_no).drop('employee_dept_no')

# partition by the employee number and order by start date "from_date"

windowSpec = Window.partitionBy("emp_no").orderBy("from_date")

# use lead function to get the "next" department name

transitions = dept_emp.withColumn('new_dept_name', lead("dept_name", 1).over(windowSpec))

transitions = transitions.filter(transitions.new_dept_name.isNotNull()).sort('emp_no')

save(transitions)

You can learn more about windows functions in PySpark here.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

11-23-2022 02:01 PM

I am solving this challenge using the power of Incorta's no-code/low-code capabilities.

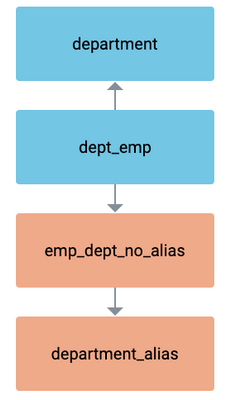

1.) Upload the CSV files, create a schema and create tables for the department.csv and dept_emp.csv files.

2.) Create aliases of department and dept_emp.

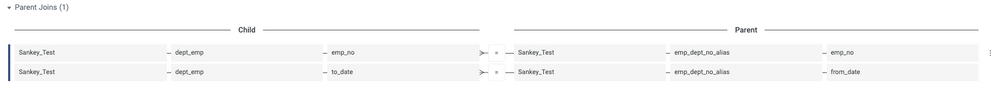

3.) Join dept_emp to the department on the dept_no. Join dept_emp to its alias with the below joins. Join the dept_emp alias to the department alias on dept_no.

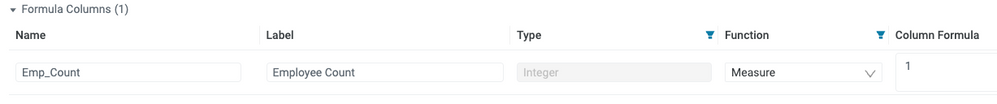

4.) Add a Formula Columns to the dept_emp table with the Column Formula of 1. We are creating this because at this time, the Sankey only supports sums and not counts.

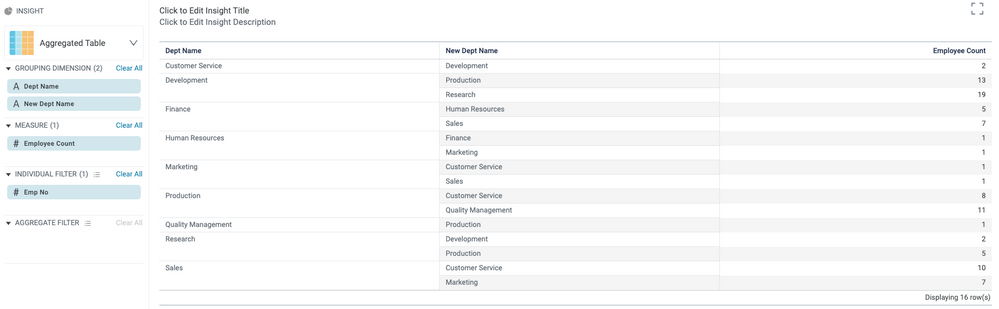

5.) Create an Aggregate Table insight. Put the Dept Name from the department table in the Grouping Dimension. Put the Dept Name from the department alias table in the Grouping Dimension below the other Dept Name, I recommend renaming it not to confuse the two. The formula column you created should be in the measure. To filter only on transfers, grab any column from the dept_emp alias table, place it in the Individual Filter, and only show Not Null records.

6.) Change the Insight into a Sankey and add the formula column you created as the Sort By of the Dept Name in the Source.

Here is the diagram of my Sankey schema for this challenge.

The join between the dept_emp and alias of dept_emp only works because the to date from the previous department matches the from date of the new department. If this was different we would have to create a different solution using Incortas First Version filter or SQL/Pyspark.

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

01-26-2023 06:33 PM

Here is a video demonstration of the solution @john_cross provided above. Hope this is helpful!

- Mark as New

- Bookmark

- Subscribe

- Mute

- Subscribe to RSS Feed

- Permalink

- Report Inappropriate Content

01-27-2023 07:47 AM

Awesome video! Thanks @LukeG !