- Incorta Community

- Knowledge

- Data & Schemas Knowledgebase

- Modeling Schemas, Tables, and Joins

- Subscribe to RSS Feed

- Mark as New

- Mark as Read

- Bookmark

- Subscribe

- Printer Friendly Page

- Report Inappropriate Content

- Article History

- Subscribe to RSS Feed

- Mark as New

- Mark as Read

- Bookmark

- Subscribe

- Printer Friendly Page

- Report Inappropriate Content

on

03-16-2022

10:20 AM

- edited on

12-12-2023

12:56 PM

by

![]() Tristan

Tristan

Introduction

Data modeling in Incorta involves creating physical schemas, tables, and joins between the tables. Data modeling activity ensures that the data model:

- Is consistent

- Is maintainable

- Has optimal load performance

- Returns correct data in the dashboard

Physical Schema Design

A schema is a collection of tables tied to a data source. While designing the schema the following are best practices:

- Keep the Schema names intuitive and simple, names are case sensitive

- Special characters and spaces are not allowed in a name

- For efficient memory utilization avoid table duplication across different schemas. If you have a table that needs to be duplicated because it plays multiple roles in a query, it is advisable to create table aliases.

- While creating or importing tables using the schema wizard, select only the required columns and spell out each column. For example, use “select col1, col2 from table” instead of “select * from table”. This ensures that the table load will work even if new fields got added to the underlying table after the table was initially saved.

- You can free up memory in a loaded table by setting the datatype of columns to Null. However, all dependent joins, formula columns, and dashboards will break as a result.

- You can group tables by business processes or dashboards in a schema to make it modular and easier to refresh and maintain. For example, you can create a schema with just the common tables which are needed to be joined by tables in other schemas.

- Refresh schedules can only be set at a schema level so it is important to put tables which need to be refreshed at a certain frequency in the same schema. If you have a set of tables to refresh every 10-15 min, it is not recommended to put them in the same schema as other large tables which need to be refreshed at a different schedule.

- A schema is loaded over two distinct phases; the source data extraction phase, and the loading phase. Where possible ensure that the tables have an incremental extract query to reduce the extract and load time.

- Joins and formula columns are refreshed after data is loaded so ensure that the formula column definitions are optimized and kept at a minimum to minimize the load time.

Tables

- Table names are case sensitive.

- Define Primary keys appropriately:

- Keys allows upsert operation during incremental extract and allows correct joins to the child tables. (Note that Keys cannot be defined for Double data type columns).

- If keys are not defined then -

- If Incremental option is off then loads are always full.

- If Incremental option is on then rows will always be appended at every load as there are no keys to do an update.

- Since tables can have the same column names you can modify the column label to reduce ambiguity. For example 'Approver Name' versus just Name.

- Use integer instead of Long as data type where possible to reduce the memory required.

- You can create formula columns with removeTime() function to create date columns on any Timestamp fields.

- UNION operations can be achieved by defining multiple data sources (SQL icon) for a table.

- There is a limitation on character fields of 2 Billion, in these cases the field should be defined as TEXT.

- Prior to adding a formula column in a table test it first by creating a formula column in a dashboard. Once it works then you can add it to the schema. For eg using a Lookup() function in a formula field.

- Tables by default are memory optimized meaning the parquet files will be loaded to memory, if you do want data to be only in Parquet files then turn off the memory optimized button. This is usually done for tables with huge # of rows which you do not want to be loaded in memory for querying via dashboards but only needs to be processed in a MV.

Aliases

- An Alias refers to a table by an alternate name and can be created for tables in the same or other schemas. They are needed when a child table has multiple fields which are foreign keys to the same parent table. For example, the Orders table has bill_to_address and ship_to_address fields which point to the address table. In this case we will create an Alias of the Address table, name it Ship_Address and join that to the child table on the ship_to_address field and use the bill_to_address field to join to Address table. In Analytics parlance Alias are an example of role playing dimensions. Keep in mind -

- Aliases should also be used to avoid cyclic joins. We should not have cycles in a schema as Incorta will pick a path randomly and it may return incorrect data.

- Aliases inherit security filters from the Original table.

- Aliases inherit joins from the original table (to other tables), unless a different join (on a different column) has been specifically created using the Alias.

- With Aliases we can change the join column to another table

Joins

- All joins in Incorta are Left outer joins by default. This implies that the records in the child table (for example Sales) are not removed from the query if they don’t have a corresponding record in the parent table (for example customer_id is null, or Sales.customer_id does not exist in Customer table). If the report is grouping by a field from the parent table (for example CustomerName), those records will show under a NULL bucket (CustomerName = NULL).

- Ensure that a child is joined on the key columns of a Parent table

- An example of such a join is Sales table joining to the Customer table. There are typically multiple Sales records for one customer. But there is one record for every customer in the Customer table. This join is created in Incorta by selecting Sales as the child table (left side in the Join screen), and Customer as the parent table (right side in the Join screen.)

- To transform a join into an inner join, you need to add a filter on the parent column to be not null in the insight itself. For example (Customer.CustomerKey not null.)

- To transform a join into a full outer join, for example you want to see customers with no Sales records, then you can click on the dimension and turn on the Show Empty Group button. Notice that you need to turn on this button for every dimension on which you want to see a right outer join separately.

- Incorta allows you to create a join between two tables in different schemas. The join will show under both schemas (child and parent schema). But you can only edit it from the child schema.

- Please ensure that you do not have a formula column join to table in a different schema.

- Verify that there are no cyclic joins (Multiple paths between any two tables, this causes Incorta to select a random path which may cause incorrect data to be returned). Create Aliases to break the cycles.

- Joins are updated every time the schema is refreshed. If the join is between two tables in different schemas, it is refreshed every time each one of the two schemas is refreshed.

- Make sure compound joins are created as a single compound join instead of splitting them into individual joins. For example. Table1.c1 = table2.c1 and table1.c2 = table2.c2

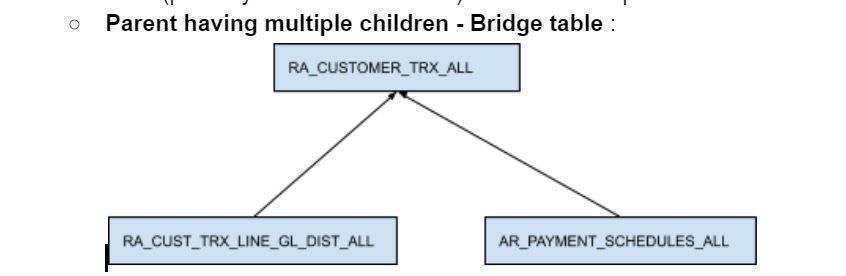

- If the relationship between two tables is M:M then we need to create a bridge table (possibly a materialized view ) The next example shows how to model it:

In the M:M (bridge table)) scenario there is an intersection table between two tables, in Incorta you can think of this as a common parent for two child tables. We can create an aggregate report on these three tables but creating a list report with all the three tables will not work .

- First option is to split them into two insights in the same dashboard and then using the common parent to filter data.

- Second option is to create a bridge table using a MV with the ids for both the child tables and using that to join these tables as parents.

Link to Video: https://www.youtube.com/watch?v=FJgFiDvToUY

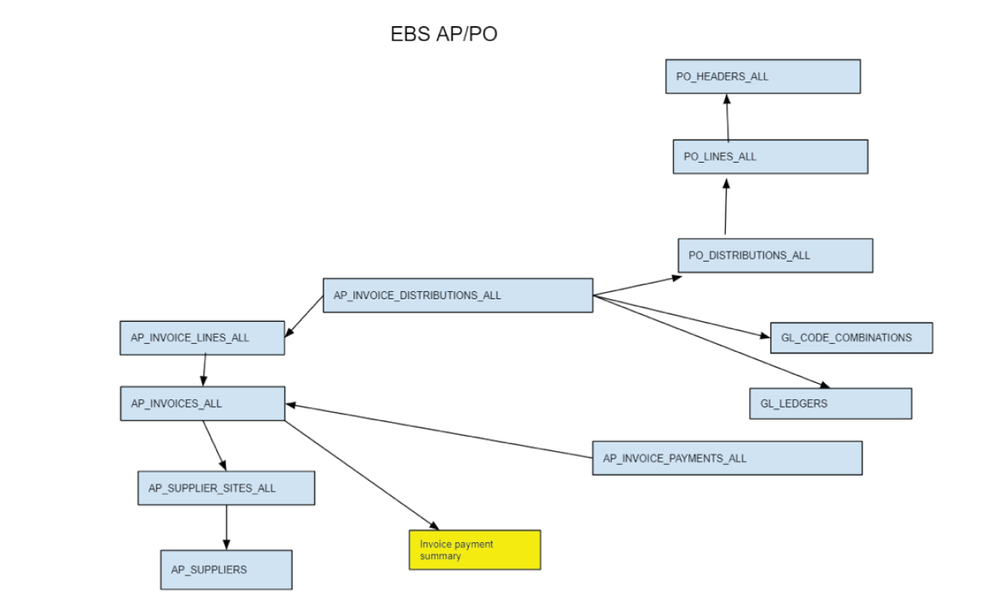

- Another modelling usecase:

In the model above, notice that AP_INVOICE_PAYMENTS_ALL is a child of AP_INVOICES_ALL and there is no direct path to navigate from AP_INVOICE_DISTRIBUTIONS_ALL to that. If we want to report payment amount with Invoice number and PO number, it is not possible to do that in one list report in Incorta.

In this case, we would create a slim Materialized View (shown in yellow) that has the invoice_id (key) and the aggregated payment amount from the payments table. Then make that as the parent table of AP_INVOICES_ALL

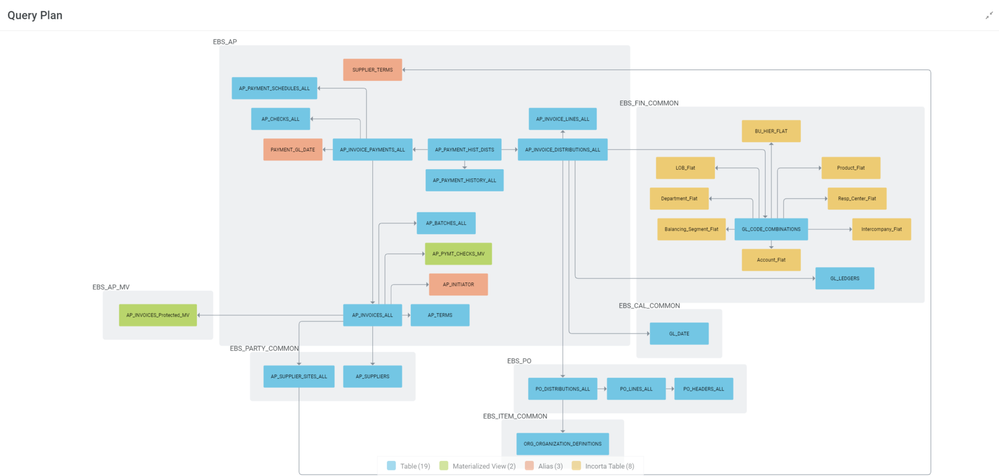

Sample Incorta Query Plan for EBS Procure To Pay