- Memory Sizing for Incorta 4

on 04-27-2022 10:20 AM

Introduction

Memory Sizing Rules of Thumb (for 4.1, 4.2, 4.3, 4.4 only)

Most of the "rules of thumb" we apply for sizing an Incorta environment depend on the amount of compressed data we expect in memory, which we call "x" below. For now, the easiest way to calculate x is to use the number shown on the Incorta Schema page (or the sum across all tenants and schemas). The list below enumerates the main rules we apply when sizing Incorta hardware.

- The amount of memory (i.e. RAM) required by the loader service should be roughly 2x the size of the compressed data in Incorta memory as reported by the Schema page in the platform. For v4.3 and v4.4, this recommendation is 10% larger, or 2.2x. For example, if we have 100GB of data reported by the Incorta Schema page, we would want the loader service to have access to 200GB of RAM (220GB on v4.3 and v4.4).

- The amount of RAM for the analytics service is a bit trickier, as the analytics service loads only the columns required to render the dashboards or to service queries through the SQL interface. Thus, if we have 5000 columns across all of the tables in our schemas, yet our dashboards employ only 500 of the columns for display, filters, calculations, etc., then we would require only 10% of the memory required by the loader service. We are typically conservative here, however, as dashboards and query profiles can change over time. For that reason, we typically suggest that the analytics service have about 1x the size of the compressed data in memory, which is roughly 50% of the memory available to the loader service.

- The amount of disk required for the shared storage layer should be at least 10x the size of the compressed data.

- The number of cores for the loader service is a function of how many tables and schemas load in parallel. We typically recommend at least 16 cores for the server.

- The number of cores for the analytics service is a function of peak end-user concurrency and the average number of insights per dashboard. We typically recommend at least 16 cores for the server.

- For both the analytics service and the loader service, through the CMC, set the on-heap memory to 25% of the memory allocated to the service and set the off-heap memory to 75% of the memory allocated to the service.

- Leave about 10GB of RAM for the server operating system when configuring your hardware or VMs.
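The rules above reduce to simple arithmetic on x. The following is a minimal sketch of that arithmetic, not an Incorta tool: the function name `size_incorta` and its parameters are hypothetical, and it assumes that "the size" in the disk rule means x. The multipliers are taken directly from the list above.

```python
def size_incorta(x_gb, version="4.4"):
    """Apply the rules of thumb to x, the compressed data size in GB.

    Hypothetical helper for illustration; not part of any Incorta API.
    """
    # Loader: 2x for v4.1 and v4.2, 2.2x for v4.3 and v4.4.
    loader_factor = 2.2 if version in ("4.3", "4.4") else 2.0
    return {
        "loader_ram_gb": round(x_gb * loader_factor, 1),
        "analytics_ram_gb": round(x_gb * 1.0, 1),  # conservative 1x
        "shared_disk_gb": round(x_gb * 10.0, 1),   # at least 10x (assumed to be of x)
        "min_cores_per_service": 16,               # loader and analytics alike
        "os_reserve_gb": 10,                       # left for the operating system
    }


sizing = size_incorta(100, version="4.4")
for name, value in sizing.items():
    print(f"{name}: {value}")
```

With 100GB reported by the Schema page, this gives a 220GB loader on v4.4 (200GB on v4.1 or v4.2), a 100GB analytics service, and at least 1000GB of shared disk.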

On-heap vs Off-heap

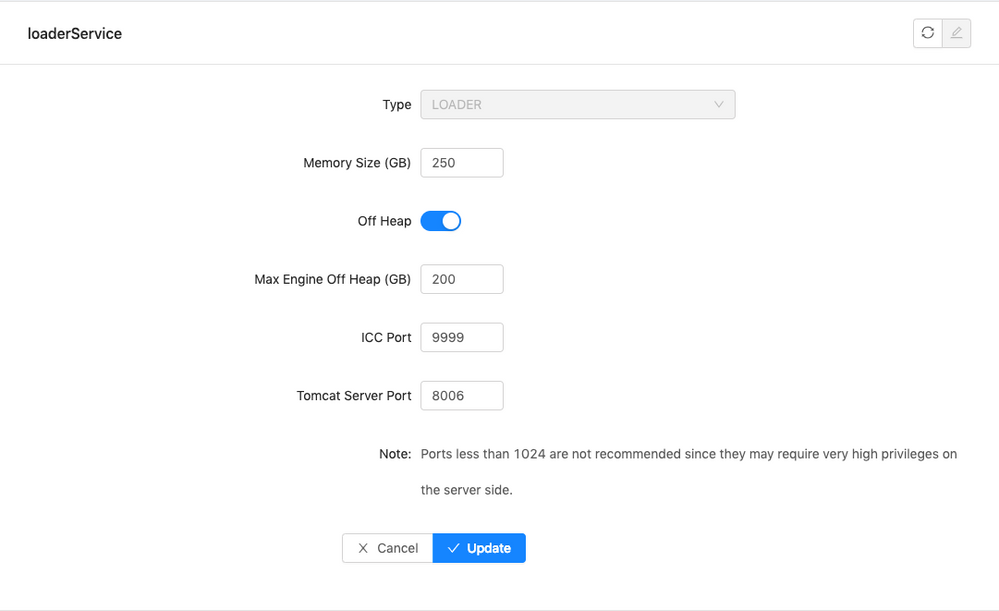

Incorta v4 supports both on-heap and off-heap memory management for both the analytics and the loader service. On-heap memory refers to memory inside the Java "heap" space, which the JVM manages and garbage-collects. Off-heap memory refers to memory allocated outside the Java heap, which the garbage collector does not manage. As Java processes, the analytics service and loader service both have settings in the CMC that control the on-heap usage vs. the off-heap usage. The screenshot from the CMC shows these settings.

As a rule of thumb, it is good practice to keep about 70-75% of the memory offered to Incorta off-heap. This keeps the Java heap of the loader and analytics services (i.e. the on-heap memory) smaller, which makes Java maintenance activities like garbage collection less intrusive.

If we revisit the concept of "x" from the "Rules of Thumb" section above, we are ultimately interested in the amount of compressed data we expect in the Incorta storage layer (i.e. "x"). Based on the rules of thumb above, we plan for 2x memory for the loader service (2.2x for v4.3 and v4.4) and 1x memory for the analytics service. The "Memory Size" setting controls the on-heap memory size, and this should be 20-25% of the 2x or 1x memory size, for the loader and analytics service respectively. The off-heap component should be set to 70-75% of the 2x or 1x memory size, for the loader and analytics service respectively. The 25%/75% split from the list above satisfies both ranges.
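As a worked example of the split, the sketch below divides a service's memory into its on-heap and off-heap settings. The helper `split_heap` is hypothetical and only illustrates the arithmetic; it defaults to the 25%/75% split from the rules of thumb, and the resulting values would still be entered by hand in the CMC.

```python
def split_heap(service_ram_gb, on_heap_fraction=0.25):
    """Split a service's memory (GB) into (on_heap, off_heap).

    Hypothetical helper; on_heap_fraction defaults to the 25% rule of thumb.
    """
    if not 0.0 < on_heap_fraction < 1.0:
        raise ValueError("on_heap_fraction must be between 0 and 1")
    on_heap = service_ram_gb * on_heap_fraction
    off_heap = service_ram_gb - on_heap
    return on_heap, off_heap


x_gb = 100  # compressed data size reported by the Schema page

loader_on, loader_off = split_heap(2 * x_gb)        # loader at 2x
analytics_on, analytics_off = split_heap(1 * x_gb)  # analytics at 1x

print(f"loader:    on-heap {loader_on} GB, off-heap {loader_off} GB")
print(f"analytics: on-heap {analytics_on} GB, off-heap {analytics_off} GB")
```

For x = 100GB this yields 50GB on-heap and 150GB off-heap for the loader, and 25GB on-heap and 75GB off-heap for the analytics service.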

Keep in mind that these settings control only the amount of memory allocated to the Incorta services. For example, you may have a 1TB RAM server and choose to allocate only 200GB to the loader service and 100GB to the analytics service.
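A quick way to sanity-check such an allocation is to subtract the service allocations and the operating system reserve from the server's RAM. The sketch below assumes both services run on the same server and takes 1TB as 1024GB; `ram_headroom_gb` is a hypothetical name used only for this illustration.

```python
def ram_headroom_gb(server_ram_gb, loader_gb, analytics_gb, os_reserve_gb=10):
    """Return the RAM (GB) left after the Incorta services and the OS reserve.

    Hypothetical helper; assumes loader and analytics share one server.
    """
    headroom = server_ram_gb - (loader_gb + analytics_gb + os_reserve_gb)
    if headroom < 0:
        raise ValueError(f"server is over-allocated by {-headroom} GB")
    return headroom


# The example above: a 1TB server with 200GB for the loader and 100GB for analytics.
headroom = ram_headroom_gb(1024, loader_gb=200, analytics_gb=100)
print(f"unallocated RAM: {headroom} GB")
```

A negative result raises an error rather than returning silently, since over-allocating the services beyond physical RAM is the case worth catching.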