on 03-02-2022 09:32 AM, edited on 10-04-2022 07:41 AM by KailaT

Introduction

Testing applications before deploying them to production is a well-understood topic, and there are many resources that describe the accepted best practices. Your organization likely has a documented process for it. This article therefore focuses not on general testing practices but on best practices specific to Incorta.

What you should know before reading this article

We recommend that you be familiar with these Incorta concepts before exploring this topic further.

Applies to

These concepts apply to all releases of Incorta.

Let’s Go

There are lots of different types of testing to consider when deploying software. We will touch on the following types, focusing on Incorta specifics:

- Data Validation

- Unit Testing

- Dashboard Functional Testing

- Performance Testing

- User Acceptance Testing (UAT)

- Smoke Testing

- Regression Testing

- Testing Automation

Data Validation

Bringing data into Incorta is one of the first and most important steps to getting value out of your investment in Incorta. Depending on the amount of data in your data source(s) you may choose to bring in a full load of all the data available or you may choose to limit it. If you choose to limit your data, make sure that you understand exactly what the rules are for bringing the data in and how many records to expect.

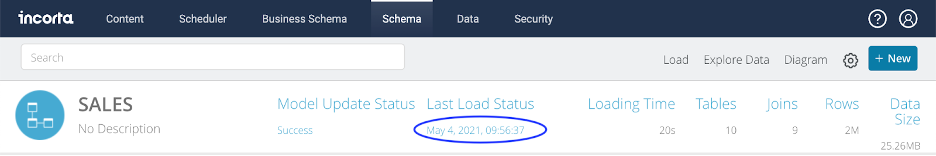

The most basic data check is to validate that the number of rows loaded into Incorta for each table matches what is expected. To do this, select the schema you are validating from the Schema tab and then click the timestamp just below the Last Load Status label.

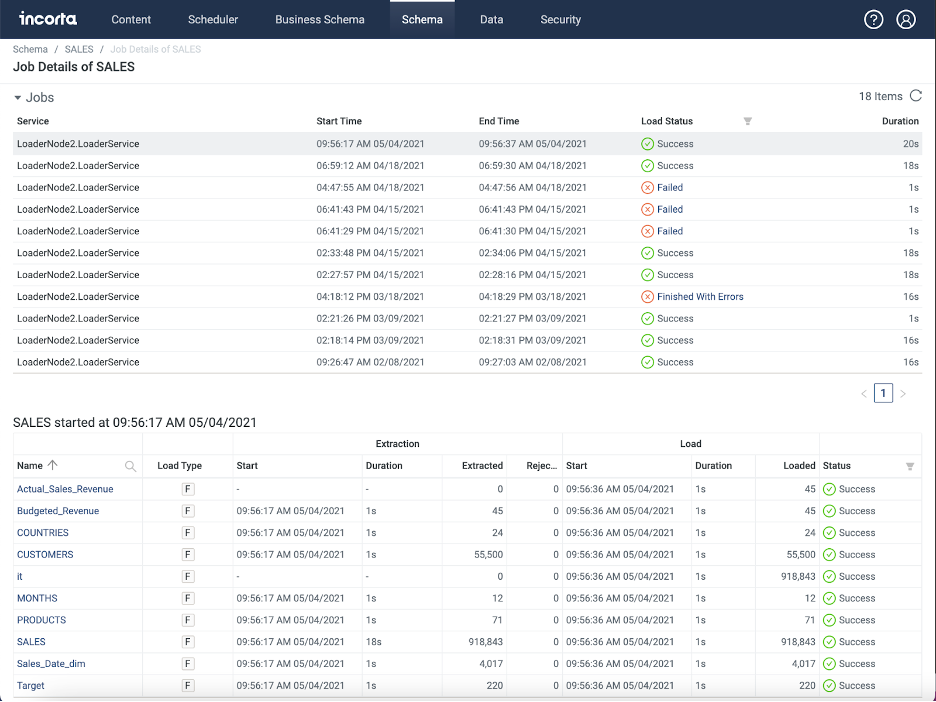

This will bring you to the Job Details screen where you can review the history of loads for the schema. When you select the load that you are verifying in the first section you will then see statistics on how many records were extracted, loaded and rejected for each object in the schema.

You can also create listing table insights for the tables/objects that you are checking and verify record counts on dashboards created for the purpose. This is a useful exercise in any case because the next part of data validation is to check that the data in each column is correct.
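The row count check described above can be sketched in a few lines of Python. This is a minimal illustration, not an Incorta tool: the `LoadStats` class, the `check_row_counts` helper, and the table names and counts are all hypothetical, and the numbers are assumed to have been copied by hand from the Job Details screen and from your source system.

```python
from dataclasses import dataclass


@dataclass
class LoadStats:
    """Per-table numbers as read off the Job Details screen (entered by hand here)."""
    extracted: int
    loaded: int
    rejected: int


def check_row_counts(expected: dict[str, int], stats: dict[str, LoadStats]) -> list[str]:
    """Return one human-readable problem per issue found in a table's counts."""
    problems = []
    for table, want in expected.items():
        got = stats.get(table)
        if got is None:
            problems.append(f"{table}: no load statistics found")
            continue
        if got.rejected > 0:
            problems.append(f"{table}: {got.rejected} rows rejected")
        if got.loaded != want:
            problems.append(f"{table}: expected {want} rows, loaded {got.loaded}")
    return problems


# Hypothetical table names and counts, for illustration only.
expected = {"orders": 1000, "customers": 250}
stats = {
    "orders": LoadStats(extracted=1000, loaded=998, rejected=2),
    "customers": LoadStats(extracted=250, loaded=250, rejected=0),
}
for problem in check_row_counts(expected, stats):
    print(problem)
```

An empty result means every table loaded the expected number of rows with no rejections. If you limit the data you bring in, the expected counts should reflect those limiting rules rather than the full source table.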

When validating the data, it is often easiest to pick a subset, because validating every data element in a table that contains millions of rows is not practical. A period of time, such as a month or even a single day, is a good option, but any subset that suits your data is fine.

We suggest checking aggregations of numeric columns to make sure that they match your source across the subset of data that you have chosen. You can even aggregate ID columns if they are numeric. Along with the row count, this should give you good confidence that what you have in Incorta is correct. That said, it is also a good idea to pick several records that represent the typical kinds of data and validate, data element by data element, that you see exactly what is expected.
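As a rough sketch of the aggregate comparison, the snippet below compares sums taken from the source system with the same sums taken from Incorta for one subset of data. The `compare_aggregates` helper, the column names, and the values are hypothetical; how you obtain the two sets of numbers (a source query, a listing table insight, and so on) is left to you.

```python
import math


def compare_aggregates(source: dict[str, float], incorta: dict[str, float],
                       rel_tol: float = 1e-9) -> dict[str, tuple]:
    """Return {column: (source_value, incorta_value)} for every aggregate that differs."""
    mismatches = {}
    for column, source_value in source.items():
        incorta_value = incorta.get(column)
        # A missing column or a value outside the tolerance both count as a mismatch.
        if incorta_value is None or not math.isclose(source_value, incorta_value, rel_tol=rel_tol):
            mismatches[column] = (source_value, incorta_value)
    return mismatches


# Hypothetical sums for one month of data, keyed by column name.
source_sums = {"row_count": 1200, "amount": 45310.25, "order_id": 7260600}
incorta_sums = {"row_count": 1200, "amount": 45310.25, "order_id": 7260150}
print(compare_aggregates(source_sums, incorta_sums))
```

A relative tolerance is used instead of strict equality because decimal amounts can pick up tiny floating-point differences between systems. For integer columns such as ID sums, any real discrepancy will still be far larger than the tolerance.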

In addition to validating the columns that pull directly through from your source, it is also important to check the validity of any formula columns that are created at the physical table level.

Unit Testing

The resource who is building a dashboard should unit test their work to validate that it meets all requirements. We recommend that each of the following be checked:

- Right columns of data

- Right formula columns

- Right ordering of columns

- Right chart type

- Right values

  - Individual records

  - In aggregate

- Right filtering

  - Filters available

  - Applied filters are correct

- Right security permissions

  - Row level

  - Column Level (Data Masking)

- Access to the dashboard

- Location of dashboard

- Data Security

Dashboard Functional Testing

Ultimately, dashboards are the deliverables, and the dashboards you have built need to be put through their paces to validate that they effectively answer the questions they are meant to answer. SMEs and/or end users should work through real work scenarios to validate that the dashboards are effective tools. How you approach this sort of testing depends largely on what you are using the dashboards for and what your delivery philosophy is.

Know Your Audience

Incorta is great at allowing you to quickly whip up reports/Insights on a dashboard given access to the right data. If you are just trying to get to an answer for yourself or are only planning to share with a limited group who do not need polish, then you do not need to spend a lot of time making sure that your dashboards are “production ready”. You can get your answers, validate that they are right for the use cases you are interested in, and then share them out for comment or knowledge exchange.

If, however, you are preparing content that is meant to become standard for use by particular work functions, you will want to prepare your dashboards such that they are polished and fully address the needs of your audience. In other words, you will want the dashboards to be “production ready”.

Considerations

Here are a few things to consider for functional testing:

- Use Cases / User Stories - the use cases or user stories that your dashboards are meant to address should be well understood by both the developers and testers. Documentation of them can be as simple as a user story statement (As a “role”, I need to “do x” so that “y can be achieved”). That said, to the degree that it is needed in order to prevent rework, some use cases may need more documentation. Balance the time spent on documentation against the detail of specifications needed to communicate the requirement well enough to build an effective dashboard.

- Test Cases - as with use cases, spend only as much time documenting test cases as your testers need in order to test effectively. Test cases should cover:

  - Validation of each insight on a dashboard for data accuracy and effectiveness in communicating its message. Test cases should also fit into the flow of how users will use Incorta in their daily work. That is, they should test entire processes, not just individual dashboards.

  - Layout of content: Overall, is the dashboard organized effectively from top to bottom on each tab and from tab to tab?

  - Consistency: Is there consistency from insight to insight in chart type, colors used, units, and so on?

  - Navigation with regard to drilling or links.

  - Filtering: Are the right filters available? Are there too many filters? Is the dashboard properly pre-filtered if needed? Are insight applied filters properly defined?

  - Security: If applicable, is row level security properly applied? Column level / Data masking?

- Training - are the testers trained well enough on Incorta to test effectively? Do they know the use cases / user stories well enough to test effectively?

  - Incorta’s Learn and Community sites offer many Incorta-specific learning resources

  - It also makes sense to offer your own custom training materials that cover your processes and use cases

- Security - is the content in the right place such that those who should be able to access it can and those who should not be able to cannot? Are the right privileges assigned to the right people? If applicable, is record level security working? Is data masking/column security working if used?

Performance Testing

Incorta is inherently fast but that does not mean that undersized hardware or poor design cannot result in performance issues. Keeping your eye on performance and specifically testing for performance makes sense especially as your Incorta footprint grows.

Best practice number one is to have a non-prod environment that mirrors your production environment so that as you work on new initiatives you can get a preview of how performance will be before you deploy to Production. This will also give you a place to troubleshoot issues without impacting your production users.

Incorta Tools for Tracking Performance

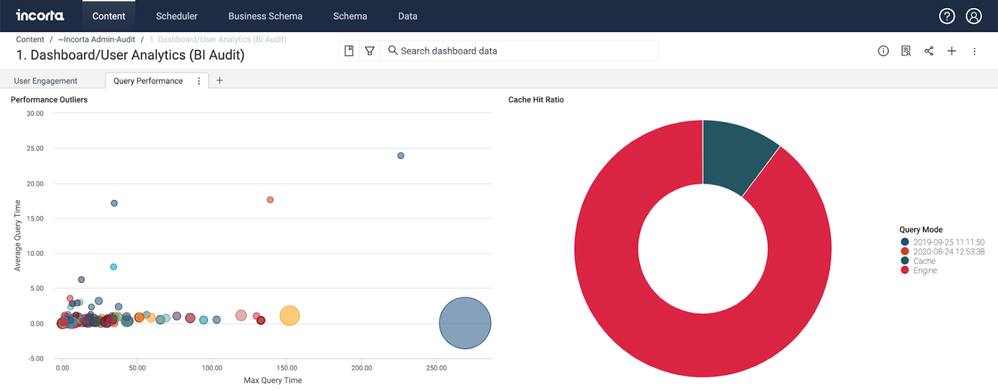

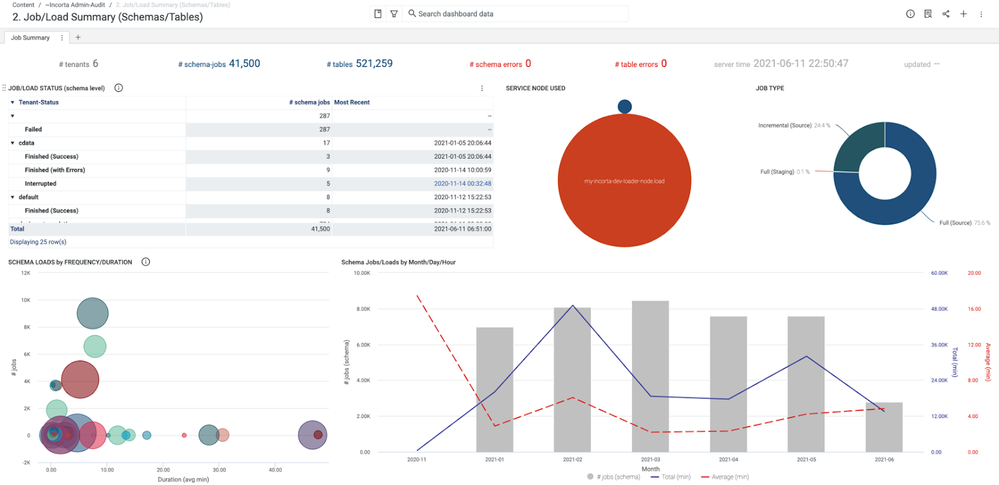

Every implementation of Incorta should include the Metadata Dashboards. These dashboards provide all sorts of information about how Incorta is operating including information about how your users are interacting with Incorta, lineage information and how Incorta is performing.

The 1. Dashboard/User Analytics (BI Audit) dashboard provides information about query performance over time and can be a valuable tool for identifying outliers.

Both the 2. Job/Load Summary (Schemas/Tables) dashboard and the 3. Job Execution Analytics (with one day) dashboard provide good information about your loader jobs.

You can also review the status of load jobs for a particular schema from the Load Job Viewer screen.

Testing

For loader jobs, as soon as you turn on your scheduled load jobs you will begin to build a baseline for performance. By reviewing the history, you will soon be able to identify jobs that need to be reviewed. It is also possible to set up alerts that will send notifications for failed or long running jobs.
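One simple way to use that history is to compare each job's latest run against its own baseline. The sketch below is only an illustration under stated assumptions: the `long_running_jobs` helper, the 1.5x threshold, and the durations are hypothetical, and the numbers are assumed to have been exported or copied from the load job history rather than read through any Incorta API.

```python
from statistics import median


def long_running_jobs(history: dict[str, list[float]], latest: dict[str, float],
                      factor: float = 1.5) -> list[str]:
    """Flag jobs whose latest duration exceeds `factor` times their historical median."""
    flagged = []
    for job, durations in history.items():
        if job not in latest or not durations:
            continue  # nothing to compare against
        baseline = median(durations)
        if latest[job] > factor * baseline:
            flagged.append(job)
    return flagged


# Hypothetical durations in minutes for two scheduled schema loads.
history = {"sales": [12, 11, 13, 12, 12], "finance": [30, 31, 29, 30]}
latest = {"sales": 25, "finance": 31}
print(long_running_jobs(history, latest))
```

The median is used as the baseline so that a single unusually slow run in the history does not distort the comparison.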

For dashboard/analytics performance testing, your options are manual testing or automated testing. Manual testing is simple and simulates real usage very well. Recruit enough users to place a representative load on Incorta, and have them all log on and use the system at the same time for a representative period. If you know that certain circumstances or times of day put heavier usage on the system, for example when loads are running, it is a good idea to test under those circumstances. Users will soon be able to tell if there is any performance degradation, and you can review the metadata dashboards for the activity that occurred during the test. See below for more information about automated testing.
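If you want to script the "everyone at once" part of such a test, the general pattern looks like the sketch below. It is deliberately generic: `simulate_users` is a hypothetical helper, and the `action` passed in is a stand-in (here just a short sleep) for whatever your own tooling does to open a dashboard. It does not call any Incorta API.

```python
import time
from concurrent.futures import ThreadPoolExecutor
from typing import Callable


def simulate_users(action: Callable[[], None], users: int, rounds: int) -> list[float]:
    """Run `action` `rounds` times for each of `users` concurrent workers; return all timings."""

    def one_user(user_index: int) -> list[float]:
        timings = []
        for _ in range(rounds):
            start = time.perf_counter()
            action()
            timings.append(time.perf_counter() - start)
        return timings

    # One thread per simulated user so that all of them are active at the same time.
    with ThreadPoolExecutor(max_workers=users) as pool:
        per_user = list(pool.map(one_user, range(users)))
    return [t for timings in per_user for t in timings]


# Placeholder action: replace with a real interaction driven by your own test tool.
timings = simulate_users(lambda: time.sleep(0.01), users=5, rounds=3)
print(f"{len(timings)} requests, slowest took {max(timings):.3f}s")
```

Collecting the individual timings, rather than only an average, makes it easier to spot the outliers that users would notice.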

User Acceptance Testing

User Acceptance Testing (UAT) is very similar to Functional Testing except that it is performed by end users instead of by SMEs. The same considerations around training and reporting results apply. UAT is normally the final hurdle before going live.

It is also important to have a process for taking feedback. Your testers may have suggestions for changes or opinions on the effectiveness of the design. Unless these requests are showstoppers to going live, it is usually best to capture the feedback for the next iteration of updates instead of getting into another development cycle before you have released your changes to production. Fortunately with Incorta, getting to and through the next iteration can be pretty quick.

Smoke Testing

After every deployment to production, it is useful to have a short list of tests that you run to verify that the deployment was successful and that Incorta is running as expected. This is called a Smoke Test and it should take no more than twenty minutes to complete.

These are useful components to include in your Smoke Test:

- Check that the new content and/or data model that was migrated is where it is expected and that it works as expected

- Run a load job (probably an incremental job) and verify that it successfully completes in the expected amount of time.

- Log in as several users who have representative privileges. This will allow you to cover content across your user base and check security.

- Run several popular pre-existing dashboards to verify that they are displaying with the expected results.

- Make sure that performance is normal

- If you have built dashboards with third party tools, like Power BI or Tableau, on top of Incorta, check a few to make sure that they run as expected.

If the Smoke Test turns up an issue, get the right parties engaged to fix it quickly. Your internal Support team and Incorta Support should be notified (submit a ticket to Incorta Support) about deployments and upgrades so that they can be on standby to assist if something does not go well. If a Smoke Test is completed successfully, you can send out the appropriate announcement and open the instance up to end users.
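A smoke test checklist can be kept as a short, ordered list of named checks. The runner below is a minimal sketch: `run_smoke_test` and the example checks are hypothetical, and each check is a placeholder returning a fixed result where a real check (manual confirmation or a call into your own tooling) would go.

```python
import time
from typing import Callable

TIME_BUDGET_SECONDS = 20 * 60  # the "no more than twenty minutes" guideline


def run_smoke_test(checks: list[tuple[str, Callable[[], bool]]]) -> bool:
    """Run each named check in order, print PASS/FAIL, and return True only if all pass in time."""
    started = time.monotonic()
    all_passed = True
    for name, check in checks:
        try:
            passed = bool(check())
        except Exception as exc:  # a check that crashes counts as a failure
            passed = False
            name = f"{name} ({exc})"
        print(f"{'PASS' if passed else 'FAIL'}  {name}")
        all_passed = all_passed and passed
    within_budget = time.monotonic() - started <= TIME_BUDGET_SECONDS
    return all_passed and within_budget


# Placeholder checks mirroring the list above; replace the lambdas with real checks.
checks = [
    ("Migrated content is in place and works", lambda: True),
    ("Incremental load completed in the expected time", lambda: True),
    ("Restricted user sees only permitted rows", lambda: False),
]
ok = run_smoke_test(checks)
print("Smoke test passed" if ok else "Smoke test failed: engage support")
```

All checks run even after a failure, so a single run shows everything that needs attention.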

Regression Testing

After your initial go-live, it is important to validate that each successive deployment of changes to your Production environment has no effect on existing content that is already working. For Incorta, this does not normally apply if the new content is confined to dashboards on top of the existing data model, unless there is a sudden, enormous uptick in usage. It is mainly necessary when there are upgrades, changes to the data model, or new configurations related to scheduling or security.

If manually performing regression testing, the easiest way to make sure that you consistently perform the tests that you need is to create a library of regression test scripts. These should include instructions for how to perform each test, expected outcomes and instructions for how to report issues if things do not work as expected.
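One lightweight way to keep such a library consistent is to give every script the same three parts. The sketch below assumes nothing about Incorta itself: the `RegressionTest` class, the `render` helper, and the example test are hypothetical, and the output is simply a printable script for a manual tester.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RegressionTest:
    """One manual regression script: how to run it, what to expect, where to report."""
    name: str
    steps: tuple[str, ...]
    expected_outcome: str
    report_to: str


def render(test: RegressionTest) -> str:
    """Format a test as a numbered script that a tester can follow."""
    lines = [f"Test: {test.name}"]
    lines += [f"  {i}. {step}" for i, step in enumerate(test.steps, start=1)]
    lines.append(f"  Expected: {test.expected_outcome}")
    lines.append(f"  If it fails: {test.report_to}")
    return "\n".join(lines)


# Hypothetical example entry in the library.
library = [
    RegressionTest(
        name="Sales summary totals",
        steps=("Log in as a sales analyst", "Open the sales summary dashboard"),
        expected_outcome="Totals match the figures recorded before the deployment",
        report_to="Open a ticket with your internal Support team",
    ),
]
for test in library:
    print(render(test))
```

Keeping the scripts as data makes it straightforward to add, retire, or modify tests over time without changing how they are presented to testers.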

You want to avoid having your regression testing become burdensome but you will probably have to incorporate new tests into your suite of regression tests over time as content is added. You may also need to modify tests from time to time as things change.

Automation

When it comes to automation, keep in mind that Incorta runs in a browser. If you already have a test automation tool that you use with other browser-based applications, there is a good chance that you can use it with Incorta to test the front end.

There are no officially supported Incorta tools for automating your testing, but if you do not have your own tool, talk with your Incorta Customer Success representative to see if there is any way that we can help you.