What is the procedure if a model update is stuck at "Sync"?

08-07-2022 05:02 PM

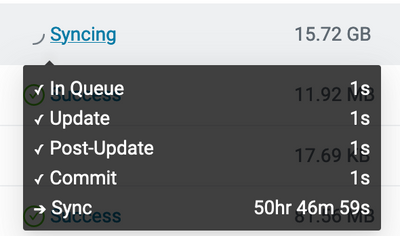

We have a schema that has been unable to complete its update for over 50 hours. We normally load this schema twice daily, and a load usually takes about 1.5 hours. Here you can see the status:

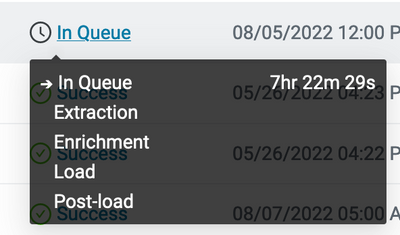

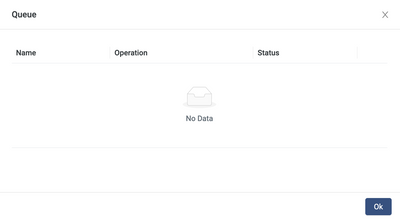

There have been no changes in the schema design that I'm aware of. Prior to Friday it ran successfully every time. If we click on "In Queue" to look for blocking jobs, the list is empty:

So how do we troubleshoot this? Is there anything in particular that would prevent Incorta from syncing the metadata in the Commit and Sync stage of a job? It's clear that it will never finish, since one phase of the job has already taken more than 30 times longer than the whole job normally takes (50+ hours versus about 1.5 hours). Sync normally takes a trivial amount of time.

I have reviewed the documentation here: https://docs.incorta.com/cloud/references-data-ingestion-and-loading and several related parts of the docs. I could not find any guidance on how to return to operational status in this situation.

I've seen some similar threads on Spark forums and developer boards about the job executor getting stuck on the last step. Any insights would be greatly appreciated.

- Labels: 2022.2, Monitoring

08-07-2022 05:11 PM

Additional note: other schemas are able to load and sync just fine. Unfortunately, the one that's locked is the most-used schema, as might be expected.

08-08-2022 12:06 AM

The Sync phase makes the data available in the Analytics Service for reporting after a schema refresh.

After a scheduled or ad hoc load job refreshes the data, that data has to be made available for reporting. Incorta uses the Sync phase to synchronize the nodes, typically the Analytics Service and the Loader Service. In a multi-node architecture, the Sync phase covers all nodes, both analytics nodes and loader nodes.

You may try the following:

- Restart the Analytics Service node and run an incremental load.

- Check whether there is a long-running process, such as a data download from a very large insight or a scheduled dashboard delivery with a large amount of data. You can check this in the Incorta metadata dashboard, if you have deployed it, or in the audit files in the audit folder under the data folder.

- Restart all Incorta nodes (the whole cluster) and run the incremental load job again.

- File a ticket with Incorta Support, and run the data collection script before you shut down and restart the nodes.

- Work with Incorta Support to check the ZooKeeper log.

To get an alert for a long-running schema job, you may consider deploying a data alert that runs against the Incorta metadata job history.
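The check such an alert performs can be sketched in a few lines of Python. This is only an illustration of the logic, not Incorta's alerting feature or its job history schema: the `JobRun` record, its field names, and the threshold of 3x are all assumptions made for the example. It flags any running job whose elapsed time exceeds a multiple of its typical duration.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class JobRun:
    """One running load job (hypothetical shape, not Incorta's actual schema)."""
    schema_name: str
    started_at: datetime
    typical_duration: timedelta  # e.g. the usual duration of a successful run


def overrun_factor(run: JobRun, now: datetime) -> float:
    """Return how many times longer than usual the job has been running."""
    return (now - run.started_at) / run.typical_duration


def stalled_runs(runs, now, threshold=3.0):
    """Return the runs whose elapsed time is at least `threshold` times typical."""
    return [run for run in runs if overrun_factor(run, now) >= threshold]
```

With the numbers from the question (more than 50 hours elapsed against a typical 1.5 hours), the overrun factor is above 33, so any reasonable threshold would have flagged the job well before the second day.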

You may be running into an issue that is addressed in a later Incorta release. The release notes for Incorta 5.2 mention: Interrupt long-running dashboards that block sync processes

Hope this helps.
