Disaster Recovery

Posted on 03-14-2022 03:13 PM, edited on 03-15-2022 11:40 AM by JoeM

Introduction

The main objective of disaster recovery is to ensure that customers can respond to a disaster and minimize the effect on business operations. This is done by making sure that data and applications are restored to the pre-disaster functional state with little downtime.

This article describes how to set up and switch to the Disaster Recovery (DR) system in case of primary site failures.

What you should know before reading this article

This article requires an understanding of Incorta architecture and its components. It is geared mostly towards Incorta administrators who are responsible for installation and administration. An understanding of replication techniques for shared storage and databases is also required.

For more details on installation and administration of Incorta, please review guides at https://docs.incorta.com.

Applies to

This article applies to on-premises installations of all Incorta versions. Disaster recovery is handled automatically for Incorta Cloud customers.

Let’s Go

An Incorta Analytics deployment consists of the following major components:

- Incorta Cluster: Consists mainly of the Loader and Analytics Services

- Shared Storage: Stores the data ingested from various data sources

- Spark Cluster: Incorta integrates with Spark to perform complex transformations and run queries on Parquet data

- Zookeeper Ensemble: A Zookeeper cluster that coordinates various tasks within the Incorta cluster

- Metadata Database: Stores core metadata information

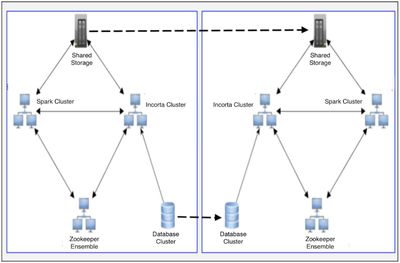

There are various ways to enable disaster recovery. The architecture described here duplicates the primary site's architecture at a disaster recovery site.

The above diagram illustrates the replication of the metadata database and the contents of shared storage from the primary site to the disaster recovery site.

The metadata database is a lightweight database that holds Incorta's dictionary information. It can be MySQL or Oracle. Shared storage holds the actual user data extracted from source systems.

In case of a total primary site failure, start Incorta on the disaster recovery site. Because both the data and the metadata are replicated from the primary site to the DR site, Incorta comes up with the replicated state. If replication is near real-time, data loss is limited to the changes that had not yet been replicated when the failure occurred.

Install Incorta on DR Site

Install the Incorta cluster on the DR site identically to the primary site. This means:

- The Incorta version and the number of loader and analytics services are the same as on the primary site

- The installation directory structure is the same

- The network CNAMEs for each loader and analytics node are the same as on the primary site, which is needed for a seamless switch from primary to DR and vice versa

Follow the same process for the Spark cluster and the Zookeeper ensemble.
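Before relying on the DR site, it can help to check these parity requirements mechanically. The following is a minimal sketch, assuming you can describe each site as a small record; `SiteConfig`, `parity_issues`, and their field names are hypothetical (not an Incorta API), and the example values are made up.

```python
from dataclasses import dataclass, field, fields


@dataclass
class SiteConfig:
    """Hypothetical summary of one site's deployment; field names are illustrative."""
    incorta_version: str
    loader_count: int
    analytics_count: int
    install_dir: str
    # Node role -> CNAME that clients and other services use to reach it
    cnames: dict[str, str] = field(default_factory=dict)
    spark_version: str = ""
    zookeeper_nodes: int = 0


def parity_issues(primary: SiteConfig, dr: SiteConfig) -> list[str]:
    """Return one message per field where the DR site differs from the primary."""
    issues = []
    for f in fields(SiteConfig):
        p, d = getattr(primary, f.name), getattr(dr, f.name)
        if p != d:
            issues.append(f"{f.name}: primary={p!r}, dr={d!r}")
    return issues


# Example with made-up values: the DR site is missing one analytics node.
shared_cnames = {"analytics1": "analytics1.example.com",
                 "analytics2": "analytics2.example.com"}
primary = SiteConfig("5.1", 1, 2, "/opt/incorta", dict(shared_cnames))
dr = SiteConfig("5.1", 1, 1, "/opt/incorta", dict(shared_cnames))

for issue in parity_issues(primary, dr):
    print(issue)  # analytics_count: primary=2, dr=1
```

An empty result means the two records match; it says nothing about whether the records themselves reflect the real hosts, so populate them from the actual installations.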

Shut down the Incorta cluster and the Zookeeper cluster on the disaster recovery site so that nothing is accidentally written to that environment, for example by a user logging in to it. Put the metadata database in read-only mode.

Set up Replication

Set up replication for the following:

Incorta installation

To keep the Incorta version the same on the primary and disaster recovery sites, replicate the whole installation directory structure from the primary site to the disaster recovery site for all nodes, including the CMC.

Also keep the Spark cluster and Zookeeper ensemble in sync using the same directory structure and CNAMEs. Because the Spark cluster is an external component, it can instead be maintained separately without replication and plugged in when the disaster recovery site needs to be activated. To keep downtime to a minimum, however, it is preferable to replicate it as well.
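One common way to replicate installation directories between Linux hosts is `rsync` over SSH; the article does not prescribe a tool, so treat this as one option among several. The following is a minimal sketch, assuming `/opt/incorta` as the installation path and `dr-node1.example.com` as a DR node (both hypothetical), and assuming the DR Incorta services are stopped while it runs. The helper names `rsync_command` and `mirror_install_dir` are illustrative.

```python
import shlex
import subprocess
from typing import List, Optional


def rsync_command(src_dir: str, dr_host: str, dest_dir: Optional[str] = None,
                  dry_run: bool = False) -> List[str]:
    """Build an rsync command that mirrors src_dir onto the same path on dr_host."""
    dest_dir = dest_dir or src_dir
    # -a preserves permissions, timestamps and symlinks; --delete removes files
    # on the DR side that no longer exist on the primary.
    cmd = ["rsync", "-a", "--delete"]
    if dry_run:
        cmd.append("--dry-run")  # report what would change without copying
    # The trailing slash on the source copies the directory's contents instead
    # of nesting a second copy of the directory inside the destination.
    cmd += [src_dir.rstrip("/") + "/", f"{dr_host}:{dest_dir.rstrip('/')}/"]
    return cmd


def mirror_install_dir(src_dir: str, dr_host: str, dry_run: bool = True) -> None:
    """Run rsync for one node; raises CalledProcessError if rsync fails."""
    cmd = rsync_command(src_dir, dr_host, dry_run=dry_run)
    print("Running:", shlex.join(cmd))
    subprocess.run(cmd, check=True)
```

`mirror_install_dir` defaults to a dry run so the first invocation only lists what would change; call it with `dry_run=False` once per node, including the CMC host, for example `mirror_install_dir("/opt/incorta", "dr-node1.example.com", dry_run=False)`.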

Shared Storage

Use the replication technology provided by your shared storage vendor to replicate the whole tenant directory from the primary site's shared storage to the disaster recovery site's shared storage.
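Whatever replication product you use, it helps to verify that the DR copy of the tenant directory actually matches the primary. The following is a minimal sketch, assuming both copies are reachable as directories on one host at paths you supply; `build_manifest` and `compare_manifests` are hypothetical helpers. Hashing every Parquet file is expensive for large tenants, and files still in flight will show up as transient differences, so treat the output as a spot check rather than a guarantee.

```python
import hashlib
from pathlib import Path
from typing import Dict, List, Tuple

Manifest = Dict[str, Tuple[int, str]]


def build_manifest(root: str) -> Manifest:
    """Map each file's path (relative to root) to (size in bytes, SHA-256 hex)."""
    base = Path(root)
    manifest: Manifest = {}
    for path in sorted(base.rglob("*")):
        if path.is_file():
            digest = hashlib.sha256()
            with path.open("rb") as fh:
                # Read in 1 MiB chunks so large Parquet files don't fill memory.
                for chunk in iter(lambda: fh.read(1 << 20), b""):
                    digest.update(chunk)
            rel = path.relative_to(base).as_posix()
            manifest[rel] = (path.stat().st_size, digest.hexdigest())
    return manifest


def compare_manifests(primary: Manifest, dr: Manifest) -> Dict[str, List[str]]:
    """Classify differences between the primary and DR tenant directories."""
    return {
        "missing_on_dr": sorted(primary.keys() - dr.keys()),
        "extra_on_dr": sorted(dr.keys() - primary.keys()),
        "content_differs": sorted(
            k for k in primary.keys() & dr.keys() if primary[k] != dr[k]),
    }
```

Usage: `compare_manifests(build_manifest(primary_tenant_dir), build_manifest(dr_tenant_dir))`, where both arguments are paths you provide; all three lists empty means the copies matched at the moment of the scan.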

Metadata Database

Replicate the metadata database from the primary site to the disaster recovery site. Make sure that the metadata database on the disaster recovery site is in read-only mode so that only replication can write to it.
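If the metadata database is MySQL, the read-only requirement can be expressed with the `read_only` and `super_read_only` system variables; replication threads can still apply changes from the primary while client writes are blocked. The following is a minimal sketch, assuming MySQL 5.7.8 or later with the DR instance configured as a replica; it only holds the SQL text and checks the result rows, so run the statements with the client of your choice. `SET GLOBAL` does not survive a server restart, so also set the variables in the option file (or use `SET PERSIST` on MySQL 8.0). For Oracle, use the read-only mechanism of your replication product instead.

```python
from typing import Iterable, Tuple

# Assumption: MySQL 5.7.8+ replica. super_read_only = ON also forces
# read_only = ON and blocks writes even from accounts with SUPER.
LOCK_DR_REPLICA_SQL = [
    "SET GLOBAL read_only = ON;",
    "SET GLOBAL super_read_only = ON;",
]

# Returns (Variable_name, Value) rows for the two flags.
CHECK_DR_REPLICA_SQL = (
    "SHOW GLOBAL VARIABLES "
    "WHERE Variable_name IN ('read_only', 'super_read_only');"
)


def replica_is_locked(rows: Iterable[Tuple[str, str]]) -> bool:
    """Given rows from CHECK_DR_REPLICA_SQL, confirm both flags are ON."""
    values = {name.lower(): value.upper() for name, value in rows}
    return all(values.get(flag) == "ON" for flag in ("read_only", "super_read_only"))
```

A periodic check that `replica_is_locked` still returns `True` catches the case where someone re-enabled writes on the DR metadata database.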

Switch to Disaster Recovery Site

When the primary site goes down and you need to activate the disaster recovery site, take the following steps:

Reverse the direction of replication, so that it runs from the disaster recovery site to the primary site, for:

- Incorta installation directories

- Shared storage

- Metadata database

Start Incorta on the disaster recovery site. If you use a load balancer in front of the analytics services, make sure that its URL is activated.
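The switchover is an ordered procedure in which each step depends on the previous one succeeding. The following is a minimal sketch of a runbook runner that executes steps in order and halts on the first failure. The step list mirrors the steps above; the actions are placeholders that always succeed, and the "Start Zookeeper" step is an assumption based on Zookeeper having been shut down on the DR site during setup.

```python
from typing import Callable, List, Tuple

Step = Tuple[str, Callable[[], bool]]


def run_failover(steps: List[Step]) -> List[str]:
    """Execute steps in order and stop at the first one that returns False.

    Stopping early matters because later steps (starting services) assume
    earlier ones (reversed replication) worked.
    """
    completed: List[str] = []
    for description, action in steps:
        if not action():
            raise RuntimeError(
                f"Failover halted at {description!r}; completed so far: {completed}")
        completed.append(description)
    return completed


# Placeholder actions: each lambda always returns True. Replace them with
# site-specific commands or checks that return True only on success.
FAILOVER_STEPS: List[Step] = [
    ("Reverse replication of Incorta installation directories (DR -> primary)", lambda: True),
    ("Reverse replication of shared storage (DR -> primary)", lambda: True),
    ("Reverse replication of the metadata database (DR -> primary)", lambda: True),
    ("Start Zookeeper on the DR site", lambda: True),
    ("Start Incorta services on the DR site", lambda: True),
    ("Activate the load balancer URL (if a load balancer is used)", lambda: True),
]
```

Raising on the first failure, rather than logging and continuing, keeps an operator from starting Incorta against a metadata database or shared storage that is still in its pre-failover state.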

Other DR Solutions

The solution discussed above incurs the cost of maintaining identical infrastructure on the disaster recovery site, but it significantly reduces downtime because both environments are kept in sync through replication.

A simpler option is to keep a single-node Incorta installation on the disaster recovery site and regularly take an export of the tenant(s) and a backup of the shared tenant storage from the primary site. During a primary site failure, import the tenant into the disaster recovery installation, copy the Parquet and snapshot files from the shared tenant storage backup, and run a load from staging. The data will only be as current as the last backup, and any metadata changes made to schemas and dashboards since the last backup will be lost. To bring the data up to date, reload it from the sources with either full or incremental loads.
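With this backup-based approach, the data lost equals the time between the last successful backup and the failure. The following is a minimal sketch that computes that window from a list of backup timestamps; `data_loss_window` is a hypothetical helper and the timestamps are made up, so in practice you would read them from whatever your backup tooling records.

```python
from datetime import datetime, timedelta
from typing import List


def data_loss_window(backup_times: List[datetime], failure_time: datetime) -> timedelta:
    """Time between the most recent backup at or before the failure and the failure."""
    prior = [t for t in backup_times if t <= failure_time]
    if not prior:
        raise ValueError("no backup was taken before the failure")
    return failure_time - max(prior)


# Made-up example: nightly backups at 01:00, failure at 15:13 the next day.
backups = [datetime(2024, 1, 9, 1, 0), datetime(2024, 1, 10, 1, 0)]
failure = datetime(2024, 1, 10, 15, 13)
print(data_loss_window(backups, failure))  # 14:13:00
```

The worst case approaches the full interval between backups, so the backup frequency effectively bounds how much data and metadata this option can lose.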
