- Maintaining Incorta Health Over Time

04-10-2024 04:26 PM - edited 04-30-2026 04:02 PM

- Introduction

- What you should know before reading this article

- Applies to

- Let’s Go

- The Need for Speed

- Prolonging the Life of your Current Hardware

- Loader

- Load Plans

- Load Only What You Need

- Run Full Loads

- MV Assistant

- Analytics

- Keep Memory Free

- Throttle SQLi

- Use MV’s to manage how much data is in memory

- Use Bookmarks

- Query Timeout

- Additional Measures

- Disk

- Adjust On Heap vs. Off Heap Memory Allocations

- Adjust Memory Between Services

- Observability Dashboards

- Conclusion

- Related Material

Introduction

One of Incorta’s great strengths is its ability to make huge amounts of data available and to deliver it fast so that users can get to insight quickly. This is only possible when the hardware that Incorta runs on, whether on premises or in the cloud, is sized properly. That is why sizing is an important exercise when you first get Incorta. But what if you have been running Incorta for a while and start to see some instances of performance degradation? How do you know whether your instance of Incorta needs tuning or whether it is time to upgrade your hardware?

This article explains what indicators to look for that signal it is time to upgrade your hardware and provides approaches to help you put off the need for a hardware upgrade.

What you should know before reading this article

Before exploring this topic further, we recommend that you be familiar with these Incorta concepts.

Applies to

These ideas apply regardless of the version of Incorta you are running.

Let’s Go

The Need for Speed

Incorta was built to allow you to load as much data as you want and to deliver it at amazing speeds. To that end, Incorta is constantly innovating to improve processing efficiency and adding new features that make Incorta more resilient, so that it can function even when resources start to max out. Keeping up with the latest releases will give you access to the newest features that will help you maximize the performance of your hardware footprint.

With that in mind, there are some things that you can monitor that will help you understand when it would be prudent to upsize your hardware.

You can view the CMC Monitor in the Cluster Management Console (CMC) on the Monitoring tab, where you will find graphs that show you how your resources are being used. It gives you the flexibility to view usage over different time ranges and gives you detail on what is occupying your resources. These dashboards can show you if your system is starting to bump up against its limits.

Prolonging the Life of your Current Hardware

As you add more data, load more frequently, and depend on Incorta more as a data hub, you may eventually hit the limits of your hardware and experience slower performance. This may come after a couple of years of use, because the hardware sizing provided to you before initial implementation is usually modeled to support two years of planned growth in data and users. You can start hitting limits sooner if you rapidly extend your footprint with more use cases. That said, the following adjustments can be made over time to keep Incorta running at its best before you upgrade hardware. Because your Incorta instance is dynamic, you will want to revisit these tactics regularly.

Loader

Slow or failing loads are a common indicator that the hardware on which Incorta is running is starting to hit limits. The following tools and features can improve the efficiency of your loads so that they run more smoothly and with fewer delays:

Load Plans

Starting with release 2023.1.0, Incorta introduced Load Plans to replace Schema Load schedules. The advantage of Load Plans over Schema Load schedules is that you no longer need to schedule every schema manually. Instead, Incorta allows you to include many related schemas in a single Load Plan and to group them sequentially if needed. This eliminates the need for gaps in your schedule and allows Incorta to process more efficiently. Read this article for recommendations on how best to take advantage of Load Plans.
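The effect of removing schedule gaps can be sketched with simple arithmetic. The durations and the 15-minute buffer below are hypothetical values chosen for illustration, and the model (separately scheduled loads padded by a safety buffer versus loads that start as soon as the previous one finishes) is a simplification rather than a description of how Incorta schedules work internally.

```python
def scheduled_elapsed(durations_min, buffer_min):
    """Elapsed minutes when each schema has its own schedule slot,
    padded by a safety buffer before the next schema starts."""
    if not durations_min:
        return 0
    return sum(durations_min) + buffer_min * (len(durations_min) - 1)


def back_to_back_elapsed(durations_min):
    """Elapsed minutes when each schema starts as soon as the previous one ends."""
    return sum(durations_min)


# Hypothetical load durations, in minutes, for three related schemas.
durations = [30, 45, 20]

with_gaps = scheduled_elapsed(durations, buffer_min=15)
without_gaps = back_to_back_elapsed(durations)
minutes_saved = with_gaps - without_gaps
```

In this sketch the saving grows with the number of schemas, since every separately scheduled schema after the first adds one more buffer.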

Load Only What You Need

If you want to optimize memory, a good place to start is by not loading unnecessary objects into it.

Because it is so easy to connect to sources and load data into Incorta, it is easy to bring in tables that you will never use. If this becomes a habit, you will impact the amount of room available for what is actually necessary. As a rule, only bring tables you will use into Incorta.

When you bring a new table into Incorta, it is best to be selective about the columns that you include. Avoid “Select *” and instead only select the columns that are needed. You can always add additional columns later if that becomes a necessity. If you do use “Select *” be aware that if columns are added to the source table at a later date, they will not automatically pull through into Incorta unless you resave the data set definition.
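As a minimal sketch of the difference, the snippet below builds a query that names only the needed columns and compares it with “Select *”. It uses an in-memory SQLite database as a stand-in for a source system; the `orders` table, its columns, and the `build_select` helper are hypothetical and not part of Incorta.

```python
import sqlite3


def build_select(table, columns):
    """Return a SELECT statement that names each needed column explicitly."""
    if not columns:
        raise ValueError("list at least one column")
    return f"SELECT {', '.join(columns)} FROM {table}"


# Hypothetical source table, created in memory purely for illustration.
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE orders (order_id INTEGER, customer_id INTEGER, amount REAL, notes TEXT)"
)
conn.execute("INSERT INTO orders VALUES (1, 42, 19.99, 'gift wrap')")

query = build_select("orders", ["order_id", "amount"])
narrow = conn.execute(query).fetchall()                  # only the two needed columns
wide = conn.execute("SELECT * FROM orders").fetchall()   # every column in the source
```

The explicit query returns two values per row while “Select *” returns all four, including a free-text column that may never be used in a dashboard.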

If you are using Materialized Views (MVs) in multi-step logic, check whether any of the MVs can remain as scalable (non-optimized) tables only. If the columns from an MV are not used in dashboards or accessed via SQLi, then you do not need to optimize the MV, which means that the data it contains will not be lifted into memory.

Run Full Loads

Incorta provides three options when loading data.

- Full Load: This type of load executes the SQL you define in your Data Set Query panel. It fully replaces the parquet file(s) that already exist for the table object. Full loads typically take the most time to process.

- Incremental Load: This load will execute the SQL you define in your Data Set Update Query panel. An incremental load does not touch any of the parquet that already exists for the table but rather creates a new subdirectory on disk with just the new data that is loaded.

- Staging Load: This type of load will not touch the source, but will instead load data from the existing table object parquet into memory.

Generally speaking, incremental loads run more quickly because they load less data and require less post-load processing. If, however, you schedule and run many incremental loads on schemas without occasionally running full loads, your load times can slow down because Incorta has to scan through many files in many subdirectories in order to put together a full picture of a table before pushing the data into memory. This would only happen after hundreds or even thousands of loads, but if you are scheduling a schema to run every couple of hours every day, you can reach this level of parquet fragmentation.

The easiest way to prevent this problem from surfacing is to run a full load before you start to see load performance deterioration. Choose a time that least impacts your users and schedule the full load to happen periodically, for example, once per week.
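To get a feel for how quickly incremental loads accumulate, here is a rough calculation. The thresholds of 500 and 1,000 loads are illustrative assumptions standing in for "hundreds or even thousands"; they are not documented Incorta limits.

```python
import math


def days_until_threshold(loads_per_day, load_threshold):
    """Days of incremental loading before `load_threshold` loads have accumulated."""
    if loads_per_day <= 0:
        raise ValueError("loads_per_day must be positive")
    return math.ceil(load_threshold / loads_per_day)


# A schema scheduled every 2 hours runs 12 incremental loads per day.
loads_per_day = 24 // 2

days_to_500 = days_until_threshold(loads_per_day, 500)
days_to_1000 = days_until_threshold(loads_per_day, 1000)

# With a weekly full load, at most one week of incremental loads accumulates.
max_loads_between_weekly_full_loads = loads_per_day * 7
```

Under these assumptions a two-hourly schedule passes 500 incremental loads in about six weeks, whereas a weekly full load keeps the count below a hundred.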

There may be reasons not to do a full load, however. You may have snapshot data that you cannot afford to delete, or you may have so much data that executing a full load takes a long time and can impact your business while it is running. In this situation, it makes sense to work with Incorta Support to find a way to defrag your parquet.

With that in mind, here are a couple of approaches that you could take to consolidate your parquet files to a minimum.

- Parquet Merge Tool

  - Work with Incorta Support to obtain the tool and the instructions for using it

  - Pause the load schedule for the schema that contains the table you want to consolidate

  - Run the merge tool

  - Load the table from staging

  - Restart the load schedule for the schema

- Use an MV to merge the parquet (it is a good idea to work with Incorta Support the first time you attempt this)

  - Create a temporary schema and define an MV with the same name as the table whose parquet files you wish to merge. It should select all the data available from the fragmented parquet, effectively emulating a Full Load of the table. Filter the data with your SQL if you do not need it all. This will bring all the data into the minimum number of parquet files required to store it.

  - Pause scheduled loads for the schema containing the table

  - From the back end, back up and then delete the parquet files related to the original table

  - Copy the parquet created when you ran the MV into the folder for the original table

  - From the UI, run a load from staging for the table

  - Verify that things look good

  - If they do, drop the MV and delete its parquet from the back end

  - Restart the load schedule

If you run scheduled full loads, consider incorporating logic into your data set definition to limit the amount of data that you keep. For example, if the business requirement is to maintain three years' worth of data, write the Full Load SQL so that it does not load any data older than three years from the current date.
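A retention filter of this kind might look like the following sketch. The table and column names are hypothetical, and the `DATE '...'` literal is ANSI-style syntax that your source database may spell differently; many databases can also compute the cutoff directly in SQL with their own date functions.

```python
from datetime import date


def retention_cutoff(today, years):
    """Return the date `years` years before `today` (Feb 29 falls back to Feb 28)."""
    try:
        return today.replace(year=today.year - years)
    except ValueError:
        # today is Feb 29 and the target year is not a leap year
        return today.replace(year=today.year - years, day=28)


def full_load_query(table, columns, date_column, today, years=3):
    """Build a full-load query that skips rows older than the retention window."""
    cutoff = retention_cutoff(today, years)
    return (
        f"SELECT {', '.join(columns)} FROM {table} "
        f"WHERE {date_column} >= DATE '{cutoff.isoformat()}'"
    )


# Hypothetical table and columns, with a fixed "today" so the result is reproducible.
query = full_load_query(
    "orders", ["order_id", "order_date", "amount"], "order_date", date(2026, 4, 30)
)
```

Computing the cutoff relative to the current date, rather than hard-coding it, means the window slides forward each time the full load runs.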

MV Assistant

One way to help your Loader service handle more loads more efficiently is to optimize the processing of Materialized Views. Although most of the MV processing work is done by Spark, the Loader has to respect dependencies as it orders loads, which means that slow MVs hold up other Loader work, not just the MV table itself. The MV Assistant, introduced in release 2023.11.0, recommends settings for improved MV performance based on the configuration of your cluster. For more information about how to set up the MV Assistant, see this article. Remember to consider the total Spark resources available when looking at the MV Assistant’s recommendations.

| Recommendation | Comments |
| --- | --- |
| Use Load Plans | Starting with 2023.1.0, Incorta introduced Load Plans, allowing you to schedule the load of multiple schemas in a single plan. They increase loader efficiency in a couple of ways: |
| Load only what you need into memory | If you are looking to optimize memory, a good place to start is by not loading unnecessary objects into it. |
| MV Assistant | The MV Assistant monitors the performance of all the Materialized Views in your cluster and provides recommendations on how to adjust the parameters of your MVs to optimize their performance. |
| Run Appropriate Load types | Use different load types depending on the circumstances |

Analytics

If your analytics services start to bog down, you will hear about it from your end users. The following ideas can help you maximize the resources available to analytics.

Keep Memory Free

Presentation of your data in insights is key, but an important part of the user experience is the responsiveness of the UI. In Incorta, speed is tied to the columnar data loaded into memory. By only putting what is necessary into memory, you can optimize the experience for your end users.

Once you have defined a table, determine if it needs to be Performance Optimized. Performance Optimized tables are pushed into memory which is what you want if you are building dashboards based on the columns in the table. If you only ever access the table via an MV or via SQLi, then you can make the table non-optimized, which means it will not take up any memory but instead will only be stored as parquet.

Another good way to save memory is to apply a Load Filter if applicable. Load Filters limit the amount of data pushed into memory. They do not limit the amount of data loaded into parquet / scalable tables.

Beyond avoiding unnecessary memory use when you create new objects, it is worth occasionally scanning for and removing objects that were created but are no longer used in Incorta. See this article for tips on how to reclaim memory.

Throttle SQLi

Incorta has introduced a Cluster Management Console (CMC) setting that prevents runaway SQLi queries from taking down Incorta. In the CMC, on the Server Configuration sub-tab under the Cluster Configurations tab, choose the SQL Interface page.

Adjust the “% of memory before throttling new connections” setting based on how you use Incorta.

The default value is 75 percent. You might want to raise the percentage if your users primarily access Incorta via the SQL Interface (SQLi). If you have many users who use Incorta dashboards and a few users who access Incorta data via SQLi, lower the percentage.
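The idea behind a percentage threshold like this can be sketched as a simple admission check. This is only an illustration of the concept: the function name, the choice of which memory pool is measured, and the use of "greater than or equal" are assumptions, not Incorta's actual implementation.

```python
def should_throttle(used_gb, total_gb, threshold_pct=75):
    """Return True when memory use has reached the threshold, meaning a new
    connection would be throttled in this simplified model."""
    if total_gb <= 0:
        raise ValueError("total_gb must be positive")
    return used_gb / total_gb * 100 >= threshold_pct


# With the default 75 percent threshold on a hypothetical 100 GB pool:
at_60_percent = should_throttle(60, 100)            # below the threshold
at_80_percent = should_throttle(80, 100)            # above the threshold

# Raising the threshold to 90 percent leaves more headroom for SQLi connections.
at_80_percent_raised = should_throttle(80, 100, threshold_pct=90)
```

In this model a higher threshold admits SQLi connections for longer before throttling, while a lower one reserves more memory for dashboard users.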

Use MV’s to manage how much data is in memory

It is possible to load very large tables into Incorta. A common large data example is IoT use cases where devices provide updates about themselves at regular short intervals. This behavior generates a lot of data, but you may not need all of the details. If you optimize all the data in these big tables, you will soon run out of memory. Instead of optimizing the detail, you can load the data as unoptimized and use MVs to lift only the data you are interested in into memory. Write MVs against your unoptimized tables to:

- Filter the data down to only what you need to track

  - For example, you might only pull in detailed records that contain error codes and ignore records that have “normal” statuses

- Aggregate the data into grains that are useful in reporting

  - For example, you might aggregate by product code and day
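The two patterns above can be sketched as SQL, run here against an in-memory SQLite database so the example is self-contained. The `device_events` table and its columns are hypothetical, and SQLite's `substr` stands in for whatever date function your MV language provides; an actual MV would be written in the language you choose for it in Incorta.

```python
import sqlite3

# Hypothetical detail table of device updates, standing in for an unoptimized table.
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE device_events (product_code TEXT, event_ts TEXT, status TEXT, error_code TEXT)"
)
conn.executemany(
    "INSERT INTO device_events VALUES (?, ?, ?, ?)",
    [
        ("P1", "2024-04-01 00:00:05", "normal", None),
        ("P1", "2024-04-01 00:00:10", "error", "E42"),
        ("P1", "2024-04-01 13:20:00", "error", "E42"),
        ("P2", "2024-04-02 08:00:00", "normal", None),
        ("P2", "2024-04-02 08:00:05", "error", "E7"),
    ],
)

# Pattern 1: keep only the detailed records that carry an error code.
FILTER_SQL = """
SELECT product_code, event_ts, error_code
FROM device_events
WHERE error_code IS NOT NULL
"""

# Pattern 2: aggregate the error records by product code and day.
AGGREGATE_SQL = """
SELECT product_code, substr(event_ts, 1, 10) AS event_day, COUNT(*) AS error_count
FROM device_events
WHERE error_code IS NOT NULL
GROUP BY product_code, substr(event_ts, 1, 10)
ORDER BY product_code, event_day
"""

errors_only = conn.execute(FILTER_SQL).fetchall()
daily_errors = conn.execute(AGGREGATE_SQL).fetchall()
```

Five detail rows shrink to three error rows with the filter and to two rows with the daily aggregate; on real IoT volumes the reduction is what keeps memory use manageable.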

Use Bookmarks

Users often have favorite configurations of their dashboards that include certain filter settings and possibly some personalization. Getting to these favorite views takes several clicks, and requests are sent to the Analytics service with each click. Bookmarks were built for just this situation. They allow each user to save their favorite views and get to them either as their default when selecting the dashboard or with one click once they have entered the dashboard.

Setting up bookmarks speeds up users' access to the views they want and reduces the work that the Analytics service has to do, because fewer individual filter applications are needed.

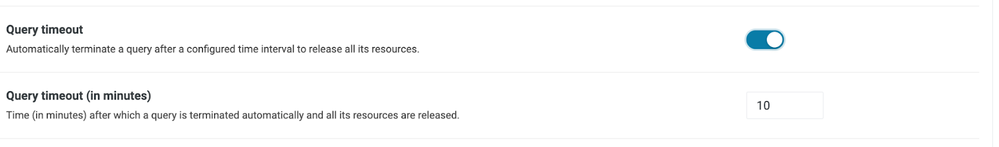

Query Timeout

Individual users can cause performance degradation issues for other Incorta front-end users if they launch very large requests. This sometimes happens when someone decides to download a huge amount of data from Incorta, but it can also happen if someone builds an ad hoc dashboard that is not properly filtered.

By activating the Query Timeout setting in the CMC, you can prevent runaway queries and thus limit their impact on the overall user population.

This setting does not prevent users from making these sorts of requests, but it limits their impact.

| Recommendation | Comments |
| --- | --- |
| Keep Memory (RAM) Free | |
| Trim Unused Objects | If you are looking to optimize memory, a good place to start is by not loading unnecessary objects into it. By being selective with regards to which tables you bring into Incorta and optimize you can clear space that will allow for faster loads and more growth over time. Beyond that, you can clean up objects that are unused to make more memory available |
| Use MVs | Load very large tables as unoptimized and then use MVs to raise only the data needed into memory. |
| Use Bookmarks | Encourage users to set up and use bookmarks to get to their favorite dashboard views. They should set their most used bookmark for each dashboard up as the default. |
| Allocate Resources | Adjust your memory and CPU allocations from the CMC to match how Incorta is used. |
| Set a Query Timeout | Activate the Query timeout setting in the CMC to prevent runaway queries from affecting your Analytics users. |

Additional Measures

Focusing on the efficient use of your Loader and Analytics services is a prime consideration with regards to the health of your Incorta implementation, but there are other resources that you can manage as well.

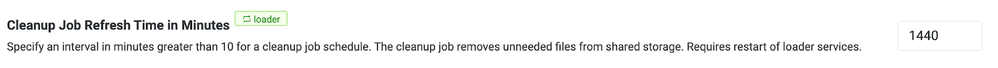

Disk

Activate the cleanup job from the CMC to remove unneeded files from shared storage. The files that are cleaned up are the result of configuration work done in Incorta and represent older versions of table objects or objects that have been created and then deleted. The cleanup mechanism can be run as frequently as every 10 minutes, but running it daily (every 1440 minutes) is probably sufficient to keep your disk in good shape.

Adjust On Heap vs. Off Heap Memory Allocations

Incorta allows you to define allocations for both on heap and off heap memory on both the Analytics and Loader services. The default recommendation is ~25% and ~75% respectively for both services. That said, allocations can be adjusted to possibly help the system perform better. Here are some reasons you might adjust:

- If the OS is running out of resources and off heap memory is not being used fully, you may be able to allocate more memory to on heap

- Conversely, if off heap is constantly in the red in the CMC (Nodes tab) and on heap does not appear to use much of its resources, you can try allocating more resources to off heap and less to on heap

- Also, because you allocate both on heap and off heap in GB, check whether all of the available GB have been allocated. If not, then you may have room to allocate more to either or both heaps.
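The arithmetic behind these checks is simple enough to sketch. The 64 GB figure and the helper names are hypothetical, and "available" here is assumed to mean the memory budgeted for a single service; adapt it to however your cluster's memory is actually divided.

```python
def split_heap(service_gb, on_heap_share=0.25):
    """Split a service's memory budget into (on_heap_gb, off_heap_gb)
    using the roughly 25/75 starting point described above."""
    on_heap = round(service_gb * on_heap_share, 1)
    off_heap = round(service_gb - on_heap, 1)
    return on_heap, off_heap


def unallocated_gb(available_gb, on_heap_gb, off_heap_gb):
    """GB that are available to the service but assigned to neither heap."""
    return available_gb - (on_heap_gb + off_heap_gb)


# Starting allocation for a hypothetical service with 64 GB available.
on_heap, off_heap = split_heap(64)

# A hypothetical current configuration that leaves some memory unassigned.
spare = unallocated_gb(64, on_heap_gb=12, off_heap_gb=40)
```

A positive `spare` value corresponds to the third bullet above: memory that could be assigned to either heap before shifting allocation from one to the other.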

Adjust Memory Between Services

Usage patterns for Loader vs. Analytics are unique for each Incorta installation based on how you use Incorta. You may use Incorta as a data hub such that your loader usage is heavy but your analytics usage is not. Or you may not load a lot of data into Incorta, relatively speaking, but still have a lot of users working with Incorta dashboards or dashboards on top of Incorta data every day, in which case your loader needs are low while your analytics needs are high.

A typical installation of Incorta balances memory usage between the Loader and the Analytics services, but if you are an on-premises customer, you may be able to reallocate memory and CPU resources between your services to better take advantage of the memory that you have.

It is also common for usage patterns to change over time, so you may need to revisit your allocations from time to time to make adjustments.

Observability Dashboards

Use the Observability Dashboards to help you analyze how Incorta is used and where there are opportunities for optimization. These dashboards are particularly useful in helping you identify load issues and opportunities for clean up.

| Recommendation | Comments |
| --- | --- |
| Cleanup Job | Activate the cleanup job to remove orphaned parquet from disk |
| Adjust Memory | Adjust memory between the Analytics and Loader services to optimize for your usage patterns |
| Observability | Use the Observability Dashboards to monitor the health of your Incorta tenant and guide your maintenance activities |

Conclusion

There are a lot of ways to keep your Incorta implementation in top condition over time. If you actively follow these suggestions, you will be able to get the most from your investment in Incorta. Here are just a few more thoughts for you.

First, the settings that are optimal for your Production cluster may not be optimal for your dev or test clusters. You may not have sized them the same way, they may not have the same amount of data or be connected to the same sources and they may not be used in the same way. You will have to take these factors into consideration as you apply the recommendations supplied here.

Second, Incorta will continue to innovate. There will be new features that make your experience better and make Incorta more resilient. Some of these updates will make Incorta more efficient still. Keeping up with the latest releases, documented on Docs and Community, is another way for you to maintain the health of your Incorta instances over time.

Finally, most of the recommendations made in this article are applicable to both on premises and Incorta Cloud customers, but if you are on premises and looking to reduce some of the headache of maintaining your Incorta infrastructure, take another look at Incorta Cloud. It offers efficiencies beyond what can be achieved on premises and always has the newest features first.