- Incorta Community

- Knowledge

- Data & Schemas Knowledgebase

- Data Engineering & Enrichment

- Subscribe to RSS Feed

- Mark as New

- Mark as Read

- Bookmark

- Subscribe

- Printer Friendly Page

- Report Inappropriate Content

- Article History

- Subscribe to RSS Feed

- Mark as New

- Mark as Read

- Bookmark

- Subscribe

- Printer Friendly Page

- Report Inappropriate Content

on

03-14-2022

01:58 PM

- edited on

06-06-2023

12:20 PM

by

![]() Tristan

Tristan

Introduction

When designing your data model in Incorta, the first step is typically to bring in all of the raw tables from each data source. All tables from each data source can be brought in and loaded without any transformations being applied or any keys or joins defined. However, then the business of joining the data, both within the same schema and across schemas (including from disparate data sources), is necessary and it is at this point also, that data aggregation and transformations can be understood and incorporated into the data modeling. The user has many choices to accomplish the data aggregation and transformation task of modeling and delivering content for the user’s reporting needs. This document will cover the most common options for Data Engineering at the table level.

What you should know before reading this article

We recommend that you be familiar with these Incorta concepts before exploring this topic further.

- Schema Manager

- About Tables

- Importing and Loading Data

- Incremental Loads

- Modeling Schemas, Tables and Joins

- Creating Materialized Views using Pyspark

Applies to

These concepts apply to all releases of Incorta.

Let’s Go

Now let's dive into some detail on how to best take advantage of the data enrichment tools that Incorta offers.

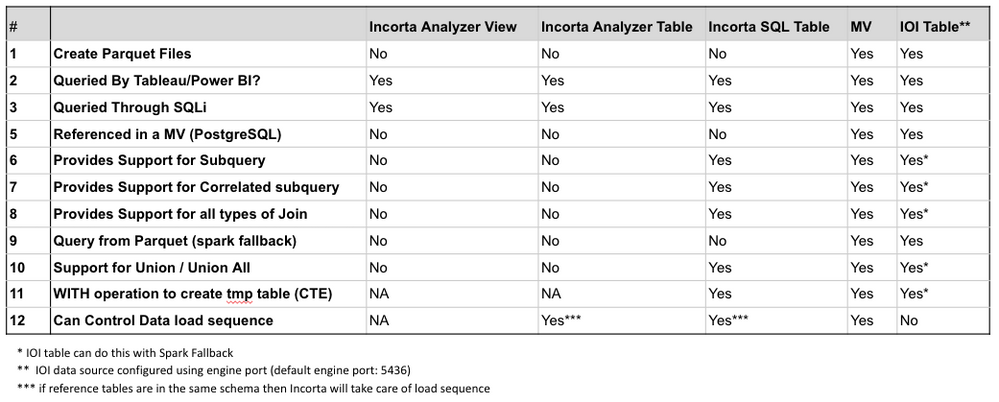

Summary of Table Types & When to Use

Below is a summary for your reference of the different choices for Incorta Table creation and key technical considerations.

More Depth

Let's get into deeper depth on how and when to use these different types of Incorta objects.

Materialized Views

Materialized Views (MVs) are one of the most commonly used methods for creating derived content in Incorta. Materialized views utilize Apache Spark technology as well as capabilities of Spark SQL, PostgreSQL, Notebooks, Python, Scala and R technologies to render a vast and almost unmatched set of possibilities for working with your data in Incorta. Incorta provides the materialized view feature to allow the transformation of extracted data from the source and reshaping of the data to use the transformed data in an Incorta model for analytics and reporting. There is virtually no limit to what is possible. We can read the data tables already loaded into Incorta by reading the data as a Spark dataframe and we can construct other dataframe objects based on business logic, which can then become an Incorta table that is used in an Incorta model, just like a regular Incorta table.

Using the Incorta Materialized View feature is totally optional and creating a Spark cluster does not have to be part of the Incorta deployment.

Here are some typical use cases that we can use to demonstrate best practices for using Materialized Views in Incorta.

Category 1: Produce pre-calculated joins that are not supported by regular Incorta joins

Incorta joins model binary relationships. Two tables are involved in a join and the join is directional, from a child table to a parent table. Every single record from the child table is pointing to zero or 1 record in the parent table. This means that each join from Parent to Child Record follows the SQL rules for a LEFT OUTER JOIN. If the Parent table has more than one possible join result, the user may get unexpected results as Incorta will choose only a single row from the parent.

Due to their binary nature, Incorta joins do not produce Cartesian products or multiple matching parent records per child record.

Distribution or allocation of the data

For example, a sales credit is to be distributed among sales based on predefined percentage of allocation, spreading a budget amount over periods, etc.

FA_BOOKS stores changes of the asset value overtime. Whenever a change occurs, a new record is created and the old record is updated with the effective end date to deactivate the record. The new record is inserted with an effective start date to indicate the starting point when the record should be considered active. A Materialize View (MV) could be used to place the book value into each time period so the snapshot of each period can be calculated and compared over time.

When ternary relationships are required since binary relationships are not sufficient to accurately describe the semantics of an association among three entities, we may consider using Materialized View or some modeling technique to resolve the problem.

Category 2: Create a result data set from tables having a Many to Many relationship

When the result set of two or more parent child relationships are used in a query, we can specify the base table in a query or at the business view level and the base table can be a driving table.

Example: We have 2 tables, Order and Invoice.

- 1 Order# may have 1 Invoice# (very common)

- 1 Order# may have >1 Invoice#s. Perhaps invoices are created for each shipping location identified in the Order. In this case Order is the Parent and Invoice is the Child

- 1 Invoice# may have >1 Order#s. Perhaps by agreement, this customer has requested to be billed monthly for Orders occurring that month, and in this case Invoice is the Parent and Order is the Child

In Incorta it is not possible to create a single join from parent to child that would support this scenario. Therefore, it is necessary to create a MV that contains ALL of the Order and Invoice Key pairings as it it is a cartesian result so there is a defined "path" from every Order to Invoice and every Invoice to Order. In the end Order is a Parent to the Child MV_Bridge by Order-Id and Invoice is similarly a Parent to MV_Bridge by Invoice_Id. This is a very common use of materialized views in Incorta

Category 3: Union of the data sets

A MV can be used to merge two or more data sets into one by using union or union all operations.

Use cases include:

- Support Common Dimension.

- Support data from multiple sources

- Merge archived data with recent data

- Merge the data from a legacy data warehouse with the data extracted from a new data source

Incorta supports multiple datasets when defining and populating a table defined in Incorta. However, in some cases, it may be desirable to extract the data from different sources in different schemas in Incorta and in different tables first, before consolidating them into a single object for querying. For example different sources may have different downtime. Also, the table structure and the value domain may be different and it requires transformation before merging them into a single table. Whatever logic can be defined via SQL or coding to extract data from multiple schemas and tables and shape the results into columns that may be merged into a single table can be accomplished by a materialized view.

Category 4: Pre-aggregated data

An MV can be created for building pre-aggregated data used in place of the detailed / transaction data for analytic reporting.

If you have too many grouping attributes in a dashboard insight, you may get an error to the effect of "The result is too large and exceeds the max group limit."

It is due to the run-time grouping processing that takes memory space and process time in the dashboard. It is not suitable for a large number of grouping attributes which has a large number of distinct values in each grouping attribute.

One of the workarounds is to produce the pre-aggregated results using the materialized view feature. Please note that using an Incorta Analyzer table to aggregate the data can result in the same constraint. Therefore, it is very common to pre-build a pre-aggregated table using a materialized view to resolve such cases.

Note: Incorta Runtime Business View that is available in Incorta 4.7.x or later can be considered as an alternative.

Incremental logic for Materialized View

For materialized views, it is recommended to avoid using the LAST_UPDATE_DATE from the source table running against "?" substitution parameter to represent the "last load date time" of the Materialize View. The source data LAST_UPDATE_DATE column may gave been successfully used to pull the data when the data was first extracted from the data source. However, the MV may start running sometime well after all underlying data was extracted. The question mark "?" is replaced with the last execution start time of the MV program itself, which will only start when the last extraction job finishes. There could be a gap in the next MV run to skip those records that were entered or updated in the source after the last extraction time but before the MV started.

- Use LAST_UPDATE_DATE against the MAX value of the LAST_UPDATE_DATE

- Add DH_UPDATE_TIME to all source tables and set the value to SYSDATE, and use them in the MV as the incremental filter

Incorta Analyzer Tables / Analyzer Business Views

An Incorta Analyzer Table is a feature that allows building an object that only exists in the Incorta engine (in memory). It behaves like a regular Incorta table that can be used in defining business views and can be used in the Incorta Analyzer for creating dashboards and insights.

An Incorta Analyzer Table can be created via the user interface that is very similar to the UI for building an aggregate table insight in a dashboard. After it is created, the resulting Incorta Analyzer Table can join with other regular Incorta tables.

Incorta Table is similar to Materialized view in that it is derived from other already extracted regular Incorta tables. The data is not directly extracted from the data source. However, unlike Materialized View, it is not necessary to write SQL or pyspark code for generating Incorta tables. An Incorta Analyzer Table is not materialized on the disk in parquet. When the Incorta services are restarted, Incorta Analyzer Tables will need to be reloaded to repopulate them in memory. Further, Incorta Analyzer Tables can only be loaded as a full load, so if the data sets are large, there is no ability to implement incremental loading for these tables. These considerations may impact the decision for using this type of table.

Comparing to Analyzer Business Views: The creation of a business view using the analyzer user interface in exactly the same way as an Incorta Analyzer table is possible. In fact these object will behave identically to the user when creating a dashboard or insight. The differences are:

| Function | Analyzer Table | Business Analyzer View |

| Creation | Created in Analyzer UI | Created in Analyzer UI |

| Runtime | Full Load with Schema | Run at each execution |

| Persistance | In memory | Not persistent |

| Modeling | Can be included in Joins | Cannot be Joined |

Best Practice

- Incorta table should only use other tables from the same schema as the data source.

- Avoid using the Incorta Analyzer table feature for a large data set. Incorta tables are fully recalculated (full load) in Incorta in memory engine in each data load refresh job for the schema that the table belongs in.

Incorta SQL (Available beginning with Incorta version 5)

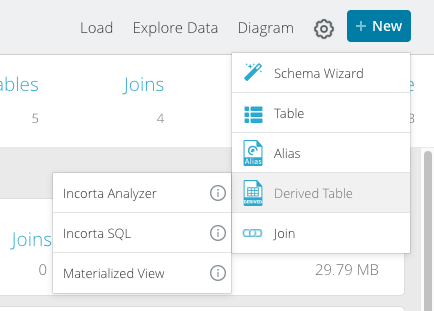

The latest release of Incorta version 5 supports the creation of a new type of derived table called the Incorta SQL table. From the Schema page, the new table is added in the same manner as other derived tables (Materialized views and Incorta Analyzer tables).

The new Incorta SQL table allows users to write very complex SQL against tables already existing within Incorta without using a Data Source JDBC connector. Because the SQL is handled by the Incorta Engine itself it can leverage the power and speed of Incorta's Direct Data Mapping. All versions / implementations of SQL support various levels of standard ANSI SQL. For example, spark SQL supports a subset, while PostgreSQL SQL supports a wider SQL syntax, and this is the reason that both of those 2 SQL formats are supported now with Materialized Views. The new Incorta SQL supports and even wider set of standard SQL for creating the most complex queries but also leveraging the power of the Incorta in memory DDM. Incorta SQL queries support:

- All types of joins

- Many-to-Many Joins

- Range Joins

- Union

- Minus

- Except

- Intersect

- Subqueries with correlated subqueries

- CTE and the use of temp tables within a single query

- Windowing and Rank functionality

- and more ...

Like Incorta Analytics tables, an Incorta SQL table is created as a table in a schema, and is loaded when the schema is loaded. The table is also persisted in memory only and is not written to parquet. Once it is created, the Incorta SQL table can be joined to other tables like any other table and can be used as a source for business views. It is anticipated that over time, this form of table will replace many of the SQL use cases that are handled by materialized views or incorta-over-incorta SQL tables that have been written utilizing the internal PostgreSQL jdbc connector. In fact, this latter form of table is discouraged not with the broader support of SQL in Materialized Views, and the newly added Incorta SQL table. There are a few limitations currently on the use of Incorta SQL tables which include:

- An Incorta SQL table can't query an Incorta Business View or Business Analyzer View

- An Incorta SQL table can't query an Incorta Analyzer Table

- An Incorta SQL table can't query another Incorta SQL table

- There is no Spark fallback support for Incorta SQL as there is for Materialized Views

- Like Incorta Analytics tables, incremental loading is not possible

- Incorta SQL tables are currently only supported for tables managed and loaded within a schema by the Loader Service, therefore, the SQLi interface from external sources that uses the PostgreSQL connector against ports 5436 (engine) and 5442 (spark), will not accept SQL that is handled by the new Incorta SQL engine functionality.

- In the current version of Incorta 5, this feature must be enabled as one of the Lab Features in the CMC

It is anticipated that over time and with new releases of Incorta, many of these limitations will be removed.

Union of Data

As has been discussed already in this document, often times one of the primary reasons to create a derived table is because there is a need to UNION one or more sets of data together into a unified view to accomplish a particular reporting need. There are multiple ways to accomplish this task in Incorta on data that has already been loaded into tables in Incorta using Spark via 2 flavors of SQL or from dataframes constructed in pyspark python code. However, it is also possible to UNION data from the same or disparate data sources into the same Incorta table as the data is extracted and loaded into Incorta. One of the most popular use cases for this is the creation of a common dimension table. What if there is a scenario where "Product" is defined in 3 separate systems (Oracle EBS, Salesforce, and an internal Data Warehouse) and it is desired to create a common Product table for reporting in Incorta that draws data from all three of these SQL sources. If we can assume that SQL can be written against EACH data source table to return the same number of columns, resulting in the same target column names, then a regular Incorta SQL table can accomplish this. The picture below shows how this would be accomplished.

After adding the table to the desired schema, click on the blue SQL icon at the top of the page:

- Select the Data Source from which the SQL will be run

- Write the SQL necessary to pull the columns from the source system that are desired for the common product table

- Hit Save

- By then clicking on the Plus Sign as shown above, you can then add more Data Sources one at a time following the same procedure.

In the case of the use case described above, one SQL could be written each against EBS, Salesforce, and DW to pull the fields necessary to populate the Product dimension table. When loading the data, Incorta will connect to each of the 3 data sources run the 3 SQL separately and then Union the results together.

Note: Just like any UNION that you might write manually via SQL, you will need to ensure that each of the separate SQL will return the same number of columns, and it is highly recommended that the columns all have the same name in each of the SQL from each data source. This is a very powerful and often underutilized feature of Incorta that allows data from disparate data sources to be merged on ingestion as long as the data makes sense to fit together without too much data transformation, or at least, transformations that cannot be accomplished as part of the SQL itself.